Energy Efficient Microchips

Interview with Mike Muller, ARM

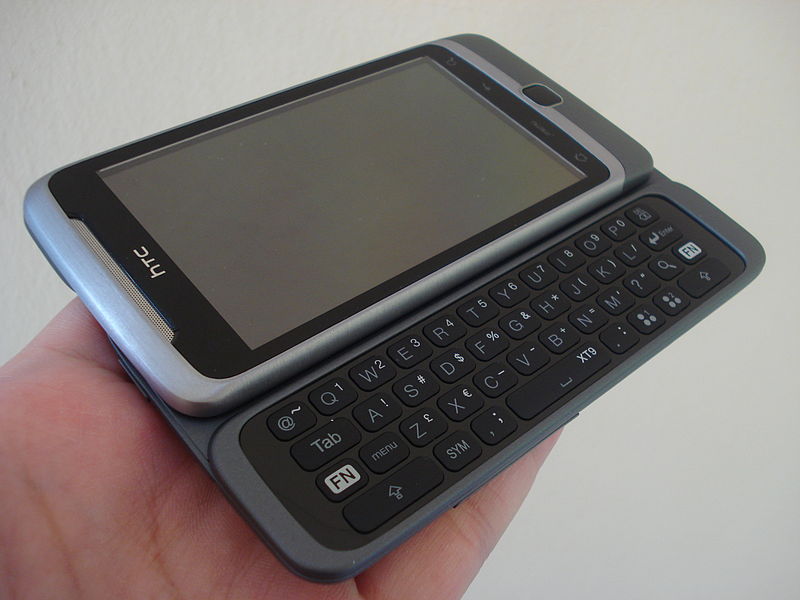

Chris - If you own a mobile device, whether that's a phone or a camera, or a portable music player, then there's probably a 99.9% chance that at least some of the computer chips running inside that device are designed by a company based here in Cambridge called ARM. And if you owned a BBC microcomputer in the 1980s, then you've also come into contact with one of their other products.

They're actually a world leader in developing digital solutions and two of the projects they're working on at the moment are ways to make computer chips smaller and much more energy efficient. Mike Muller is ARM's Chief Technology Officer. He's here with us today. Hello, Mike.

Mike - Hiya.

Chris - Thank you for coming in and joining us on the Naked Scientists. First of all, what actually determines how energy hungry a chip is?

Mike - Well I guess there are three main things. The first is, how big is it? The bigger it is, the more transistors it's got, the more power it takes. So a lot of what we do is how you get those compromises and design something that's big enough for the task in hand, but doesn't overengineer things because that takes excess power.

Chris - Why does ARM account for 99.9% of the marketplace? Why have you got that huge dominance? What is it about your technology that makes it so attractive to all those different industries?

Mike - Well it's two things. The technology is part of it, but possibly more important is the way we went about our business, which is actually not to manufacture anything and just to license our intellectual property to people who do, letting them specialise in what makes them good, and lets us focus on what we do, which is design low-power microprocessors.

Chris - But at the same time, the design must have something going for it, or all those licensees wouldn't use the technology, so what is it that they're going for?

Mike - We've put in a lot of work for how you come up with new techniques to save power. So, a lot of people in the past have focused on how do you make a chip go as quickly as possible. If you try and make it go as fast as it can, you end up actually burning a lot of excess power. If you back off just a little bit and make slightly different compromises, you can come up with solutions that take a lot less power.

Chris - And of course, when we're talking about things people want to carry around with them, the batteries are the vast bulk of the weight and were the thing holding back the technology in the early days because the more powerful you make them, the more energy they're going to get through; so they're going to burn off batteries more quickly. So, if you've got more efficient chip designs, that's got to be a good thing.

Mike - Absolutely. I think, recently, there's been a change from people designing for what's the absolute power you can have, to [designing for] low power devices, for batteries. And in the future, it's actually not the battery that's the issue, it's the available energy. When you start to have really tiny devices embedded into things all around you, and you're scavenging energy from the environment, you actually need to worry about what's the available power, and that could be very low indeed.

Chris - So how can we get the energy requirements of the chips of tomorrow down? What sorts of technologies are you guys working on, in order to make that a reality?

Mike - One of the techniques we're working on involves lowering the voltage that a chip runs at, because power is actually proportional to voltage squared; so it's one of the most important things you can do to lower power. If you took the article before, when somebody designs a process, they work out how it works then put in a little margin of safety. Then you heard about how you take the transistors and you build a few gates, and the people that design that put in a little more margin of safety. Then we come along and design our processors and put in a little more margin of safety, and then somebody puts it all together in the chip. What you end up with is a safe chip that you know works all the time and it possibly is then specified to run at 3 volts. In reality, it might run at 2 ½ volts, and the difference between 2 ½ volts and 3 volts is 50% in power.

Chris - Because it's squared.
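[Editor's note: the voltage-squared relationship can be checked with a few lines of arithmetic. The sketch below assumes that clock frequency and switched capacitance stay fixed, so that power scales purely with V squared; the function name is illustrative and not from the interview. Under that assumption, dropping from 3 volts to 2 ½ volts saves roughly 31% (or, put the other way, 3 volts costs about 44% more), so the "50%" quoted in conversation is best read as a round figure.]

```python
def relative_dynamic_power(v_actual: float, v_rated: float) -> float:
    """Power at v_actual as a fraction of power at v_rated, assuming P is proportional to V squared."""
    return (v_actual / v_rated) ** 2


# Figures from the conversation: specified at 3 V, but able to run at 2.5 V.
ratio = relative_dynamic_power(2.5, 3.0)

saving_percent = (1 - ratio) * 100      # how much less power 2.5 V needs than 3 V
extra_percent = (1 / ratio - 1) * 100   # how much more power 3 V needs than 2.5 V

print(f"2.5 V uses {ratio:.1%} of the power needed at 3 V")
print(f"Saving from lowering the voltage: {saving_percent:.1f}%")
print(f"Extra cost of staying at 3 V: {extra_percent:.1f}%")
```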

Mike - Because it's V squared. So, what you really want to do is run that chip at 2 ½ volts. People who over-clock their PC sometimes say, "I actually know that this chip can go faster than it does. I'll turn it up and run it faster." And the problem they have is, on a hot day, it might get too hot and then it stops working. So the idea is something we borrowed from the mobile phone industry where they said, "radio signals are really noisy. We have to have error recovery because you get glitches and noise." And so when you're with a mobile phone, transmitting to a base station, you actually turn down the power of the transmitter, until it starts making mistakes. The error recovery cuts in, and you can then recover that and you don't notice, and then it turns the power up as you move further away.

Chris - So it's dynamic error recovery, isn't it - your chips will run at a threshold where they're just about not making any mistakes, and you're engineering that into the chip, rather than into software that's running on the chip.

Mike - That's absolutely right and most digital designers are really uncomfortable with the idea that their chip will make mistakes. So, we've designed a processor which will correct its errors dynamically, which allows you to turn it down until you find that point where it just starts making mistakes. As long as the power to recover from those errors is better than the power you've wasted, you're ahead of the game.
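[Editor's note: the "turn it down until it just starts making mistakes" idea can be sketched as a small simulation. This is not ARM's design. The error model, the 50 mV step, the failure threshold and the recovery cost are all hypothetical numbers chosen for illustration; a real processor would measure its errors in hardware rather than compute them from a formula. The sketch only shows the break-even rule Mike describes: keep lowering the voltage while the V squared saving is larger than the energy spent recovering from errors.]

```python
V_RATED_MV = 3000    # specified supply voltage from the conversation (3 V)
V_FAIL_MV = 2500     # hypothetical: below this, errors begin to appear
STEP_MV = 50         # hypothetical step size of the voltage regulator
REPLAY_COST = 10.0   # hypothetical: recovering from one error costs ten operations' worth of energy


def error_rate(mv: int) -> float:
    """Hypothetical model: no errors at or above V_FAIL_MV, then ten times more per step down."""
    if mv >= V_FAIL_MV:
        return 0.0
    steps_below = (V_FAIL_MV - mv) / STEP_MV
    return min(1.0, 1e-4 * 10 ** steps_below)


def energy_per_useful_op(mv: int) -> float:
    """Relative energy: V squared per attempt, plus the overhead of recovering from errors."""
    volts = mv / 1000
    return volts ** 2 * (1.0 + REPLAY_COST * error_rate(mv))


def tune_voltage(floor_mv: int = 1500) -> int:
    """Step the supply down until one more step would cost more energy than it saves."""
    mv = V_RATED_MV
    while mv - STEP_MV >= floor_mv:
        if energy_per_useful_op(mv - STEP_MV) >= energy_per_useful_op(mv):
            break  # recovery overhead now outweighs the V squared saving
        mv -= STEP_MV
    return mv


chosen = tune_voltage()
print(f"Settled at {chosen} mV with an error rate of {error_rate(chosen):.4f}")
print(f"Relative energy per operation: {energy_per_useful_op(chosen):.3f} "
      f"(was {energy_per_useful_op(V_RATED_MV):.3f} at the rated voltage)")
```

[With these made-up numbers the loop settles one step below the point where errors first appear: a small error rate is cheaper to recover from than the extra voltage margin would have been.]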

Chris - The benefit of doing this kind of thing would be that not only are you using less energy because the voltage is down, but also, that means you can actually make the chip bigger and do more, so actually, the device it's in, can become more powerful without having to burn off more energy.

Mike - Well, we're always in a race; the software guys want to write more complicated software and therefore they want more power, while we'd like to have a static world where you could make things simpler and smaller. So there's always a balance between actually designing a lower power device and then finding somebody that's just made it all run faster and used that all up again.

Chris - So when a company like ARM says, "Right. We're going to come up with this sort of design." How long from the concept to it appearing in a phone like mine, sitting here on the desk?

Mike - Well we started work on this with the university... about 7 years ago... was when you could point to the first germ of an idea, and I reckon it's going to be another 3 or 4 years until you see those kinds of things in real products. So it's a long time from good idea through to actual product in the market.

Chris - When we do see it in the market, what sort of difference will it make? What will you be able to say to designers of equipment that means that they will say, "Well yes, okay. We'll definitely invest in this and this is the benchmark in the future?"

Mike - You'll probably, as a user, never know. It'll be just one of these techniques that means the next phone you buy has slightly longer battery life and does even more things than it did before, and you'll just take it for granted that that's technology marching on its forward progress...
