Mind-reading MRIs

Interview with

Chris - Thank you for joining us on the Naked Scientists. Can you tell us first of all, what actually is fMRI? How does it work?

Jack - Well fMRI measures brain activity, but it does it rather indirectly. It doesn't measure the activity of neurons in your brain. Instead, it measures changes in blood flow in your brain. So, when your neurons fire, they need to use energy and they get their energy by burning glucose with oxygen, and they extract glucose and oxygen from the bloodstream continuously as you think. And the areas of your brain that are more active, meaning more neurons are firing, tend to extract more oxygen and glucose from the bloodstream and we can measure this [blood flow] using MRI.

Chris - In other words, it's a correlate of what the brain is doing. So when we present a stimulus to the brain and look at which bits of the brain are using more oxygen, through the signal in the fMRI scanner, that tells you that bit of the brain must have something to do with the stimulus that we're presenting and how it's being decoded.

Jack - Right. It's a rather indirect measure of something that's correlated with neural activity and it's the best measure we have right now, the best non-invasive measure of brain activity, but the problem is that it has fairly low spatial and temporal resolution, relative to the neurons themselves. So you're losing a lot of information or you can't recover a lot of the information that's actually going on in the brain. Still, it's the best tool we have at the current time.

Chris - So actually talk us through what it is that you're doing and how you're analysing the signal that comes back from the brain? What sort of resolution can you get?

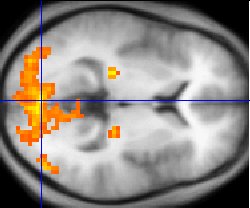

Jack - Well the typical functional MRI experiment that people do today will recover information from small regions of the brain, at a resolution of about 3 x 3 x 3 millimetres. And so, your brain is essentially divided into a large number of cubes - tens of thousands of these little 3 x 3 x 3 millimetre cubes called 'voxels' and for each individual voxel, we can build a model that describes how your brain encodes information. So for example, if we put you in a scanner and we show you pictures or movies, we can construct these models called 'encoding models' and each little voxel in the brain will have its own unique encoding model that describes how the movies or the still images are translated into changes in blood flow in that small region of the brain.
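
As a rough check on the "tens of thousands" figure, the arithmetic can be sketched as below. The brain volume of about 1,200 cubic centimetres is an assumption added for illustration and is not stated in the interview; the function name is likewise hypothetical.

```python
# Rough count of 3 x 3 x 3 mm voxels needed to tile a brain.
# ASSUMPTION: a brain volume of about 1,200 cm^3 (illustrative, not from the interview).
BRAIN_VOLUME_CM3 = 1200
VOXEL_EDGE_MM = 3

def voxel_count(brain_volume_cm3, voxel_edge_mm):
    """Return the approximate number of cubic voxels that fill the given volume."""
    brain_volume_mm3 = brain_volume_cm3 * 1000  # 1 cm^3 = 1000 mm^3
    voxel_volume_mm3 = voxel_edge_mm ** 3       # 3 mm edge -> 27 mm^3
    return brain_volume_mm3 // voxel_volume_mm3

n_voxels = voxel_count(BRAIN_VOLUME_CM3, VOXEL_EDGE_MM)
print(n_voxels)  # 44444
```

Under that assumed volume the count lands in the tens of thousands, consistent with the figure Jack gives.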

Chris - And what does that actually tell you about the underlying structure of the brain in that region, the fact that you've got these little cubes and they're changing their activity? What can you infer about brain activity from the patterns of activity that you see in the scanner?

Jack - Well, the encoding models essentially tell you how the external world is translated into changes in blood flow. So if you show someone thousands and thousands of still images or say, a few hours of movies, you can actually build a model that's quite general and it describes how any possible visual stimulus that you could show that person gets translated into blood flow. Now once you have that model, you can then demonstrate that it's working by using it to identify, for each individual second, which movie or which picture they saw. Now once you've verified that the model is accurately identifying the image or movie they saw, you can actually show the subject a completely new movie or image that they never saw before and you can actually reconstruct that movie or image from the blood flow that you've measured.
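
The fit-then-identify idea can be illustrated with a toy sketch. Everything in it is an assumption made for illustration: the three-number stimulus features, the linear form of the model, the gradient-descent fit, and the simulated responses are all invented here, and the interview does not say how the lab's models are actually built or fitted.

```python
# Toy encoding model. All features, weights, and responses are invented for illustration.

def predict(weights, features):
    """Predicted response of every voxel to one stimulus (weighted sum of its features)."""
    return [sum(w * f for w, f in zip(voxel_w, features)) for voxel_w in weights]

def fit_voxel(stimuli, responses, steps=500, lr=0.5):
    """Fit one voxel's weights by gradient descent on squared prediction error."""
    w = [0.0] * len(stimuli[0])
    for _ in range(steps):
        grad = [0.0] * len(w)
        for features, measured in zip(stimuli, responses):
            err = sum(wi * fi for wi, fi in zip(w, features)) - measured
            for i, fi in enumerate(features):
                grad[i] += err * fi
        w = [wi - lr * g / len(stimuli) for wi, g in zip(w, grad)]
    return w

def identify(measured, candidates, weights):
    """Index of the candidate stimulus whose predicted response best matches the measurement."""
    def squared_error(features):
        return sum((p - m) ** 2 for p, m in zip(predict(weights, features), measured))
    return min(range(len(candidates)), key=lambda i: squared_error(candidates[i]))

# Hypothetical "ground truth" used only to simulate measurements: 4 voxels x 3 features.
true_weights = [[2.0, 0.0, 0.5], [0.0, 1.5, 0.0], [1.0, 1.0, -1.0], [0.0, 0.5, 2.0]]

# Training phase: stimuli the subject viewed, with simulated (noise-free) voxel responses.
train_stimuli = [[1, 0, 0], [0, 1, 0], [0, 0, 1], [1, 1, 0], [0, 1, 1], [1, 0, 1]]
train_responses = [predict(true_weights, s) for s in train_stimuli]
fitted = [fit_voxel(train_stimuli, [r[v] for r in train_responses])
          for v in range(len(true_weights))]

# Test phase: new stimuli never seen in training; the subject actually saw candidate 1.
candidates = [[1, 1, 1], [0.5, 0, 2], [2, 0.5, 0]]
noise = [0.1, -0.1, 0.05, -0.05]
measured = [r + n for r, n in zip(predict(true_weights, candidates[1]), noise)]

print(identify(measured, candidates, fitted))  # 1
```

The model is fitted on one set of stimuli and then picks out which of several unseen stimuli produced a new, slightly noisy measurement. Identification here only chooses among known candidates; full reconstruction of an arbitrary image is a harder problem that this sketch does not attempt.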

Chris - Does this give us a clue, though, as to how the brain is actually wired up? So when you look at how the brain responds to a picture you show, does this inform the way in which it's decoded or deconstructed cognitively to then present to consciousness what we're seeing when we experience that stimulus?

Jack - Well absolutely. In fact, that's the entire point. The brain processes visual information with a large number of different brain areas. There's probably something between say, 50 and 75 different brain areas that are involved in visual function, and the goal of our lab is actually to build computational models that describe how all these different brain areas work. So, when you undertake to build these encoding models, what you're really trying to do is construct a quantitative theory of the way the brain processes visual information. And decoding is simply a way of verifying that your theory is actually correct. If you have a correct encoding model that describes how information is translated into patterns of brain activity, then you should be able to decode it accurately and reconstruct the stimulus that this person saw. But that's sort of a side effect and it's actually just a mathematical trick. The main goal is to build an accurate encoding model and once you have that, decoding sort of comes along for free.

Chris - And if I compare how the brain of one person responds to a picture postcard and then I present the same picture postcard to a second subject, do you get broadly the same activity in the brains of the two individuals? In other words, could you build a system that will pretty well work out what they're seeing or is it so end-user specific that you'd have to train the system to look at each individual in order to do it with any particular level of accuracy?

Jack - Well the answer is somewhere in the middle there. Individual brains vary by huge amounts, just like individuals have different heights and different weights. The size and shape of individual brains vary a lot. So if you build a sort of generic model that you can apply to any brain, you will be able to decode some coarse information, but the model will never work particularly well. If you want to recover a lot of information from someone's brain, you're going to have to build a unique model for each individual person's brain.

Chris - So tell us about the experiments that you've actually done to show that this can be done. It can be done successfully.

Jack - Well our published experiments have involved static images and the procedure is pretty simple. We put somebody in the magnet and we show them 2 to 5 hours of images. These are just flashed every few seconds and they passively view them and after we get a large enough data set, then we can construct these encoding models on a computer and then we simply put them back in the magnet and we show them new images they've never seen before, and we use the computers to reconstruct the images that they actually saw. In our more recent work, which has not yet been published and is still in peer review, we've shown that you can do this with movies as well, and that was kind of an interesting challenge because the blood flow signals measured by fMRI are very, very slow. You're only getting a snapshot of the brain once every 1 to 2 seconds. And at that rate, it's actually quite a challenging problem to try to reconstruct the motion of natural visual stimuli.
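
To put the sampling problem in numbers, here is a small sketch. The movie frame rate of 15 frames per second is an assumption chosen for illustration; the interview gives only the 1 to 2 second snapshot interval.

```python
# ASSUMPTION: a movie shown at 15 frames per second (illustrative, not from the interview).
MOVIE_FPS = 15

def frames_per_snapshot(fps, seconds_per_snapshot):
    """Number of movie frames that elapse during a single fMRI snapshot."""
    return fps * seconds_per_snapshot

fast = frames_per_snapshot(MOVIE_FPS, 1)  # one snapshot every second
slow = frames_per_snapshot(MOVIE_FPS, 2)  # one snapshot every two seconds
print(fast, slow)  # 15 30
```

At that assumed frame rate, each snapshot has to account for 15 to 30 frames of the movie, which is why recovering motion is difficult.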

Chris - So how are you getting around that? Are you going for things like, if there's a very, very apparent thing in the movie which triggers a certain response in that person, say the person sees a post box or a cat and their brain is going to respond strongly to the post box or the cat, is it that that you're picking up rather than the fact that you've got a moving image going across and lots of stimuli being presented sequentially?

Jack - It's actually both. So, your brain has probably something on the order of 50 to 75 distinct visual areas. No one's really sure exactly how many visual areas there are. What you ideally want to do is build an accurate encoding model for each individual visual area and that encoding model will describe the way information is processed in that specific visual area and will describe the features in the natural images or natural movies that are essentially represented explicitly in that area. And once you have those individual models, then when you do decoding, you aggregate information from all of these different visual areas. So for visual areas that are sensitive to motion, but don't care what the moving object is, you'll reconstruct the absolute motion from those areas. For other visual areas that may be more involved in semantic information, like they may respond to people talking or to vehicles moving, you'll reconstruct the semantic category from those areas, and then you aggregate all that data together to produce a reconstruction.
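
A toy sketch of the aggregation step follows. The area names, candidate clips, and scores are all hypothetical, and summing per-area scores is just one simple way to combine evidence; the interview does not specify how the lab aggregates across areas.

```python
# Hypothetical per-area evidence: a higher score means a better match between that
# area's measured activity and its encoding model's prediction for the clip.
candidates = ["car moving left", "car moving right", "person moving left"]

area_scores = {
    "motion area": [0.9, 0.1, 0.9],    # sensitive to direction, blind to object identity
    "semantic area": [0.8, 0.8, 0.2],  # sensitive to category, blind to direction
}

def aggregate(area_scores):
    """Sum each candidate's score across all areas (an assumed combination rule)."""
    n_candidates = len(next(iter(area_scores.values())))
    return [sum(scores[i] for scores in area_scores.values())
            for i in range(n_candidates)]

def best_candidate(candidates, area_scores):
    """Return the candidate with the highest combined score."""
    totals = aggregate(area_scores)
    return candidates[max(range(len(candidates)), key=lambda i: totals[i])]

print(best_candidate(candidates, area_scores))  # car moving left
```

Neither area can settle the answer alone: the motion area cannot tell the car from the person, and the semantic area cannot tell left from right. Only the combined scores single out one clip.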

Chris - And if you can get this working at an even better resolution than you do at the moment, what are the big questions that you now want to go on and answer with this tool?

Jack - Well again, the main goal here is to build encoding models and what we want to do is to be able to perfectly predict all of the brain activity in as much of the brain as we can. So, we haven't gone particularly far in that part yet. We are still in the process of building more and more accurate encoding models, especially for those more mysterious visual areas, and that work will be going on for quite some time. But all of the mathematical algorithms we use for constructing the encoding models and for doing decoding can be applied pretty much anywhere in the brain. So for example, you could imagine building encoding models for auditory areas, for recovering music and speech processing. You could imagine building these models for the frontal areas of the brain that are involved in abstract thought and that work will be going on for a long time because there are few computational theories that neuroscientists have right now that describe accurately how the higher order sort of more mysterious parts of the brain actually work. Right now, we're working on the visual system because it's relatively easy, relative to all of the other parts of the brain. We all know what the visual system does, we have some rough ideas of how it's laid out, and we can control the input very carefully. If we try to build a model of the front part of the brain where you think about your future and you plan, and all those sorts of things, it's both very difficult to model those areas and it's very difficult to control the input to those areas. So, progress in building encoding and decoding models for more abstract parts of the brain is going to proceed much, much more slowly.
