What are Supercomputers used for?

Interview with Paul Calleja, Director of the Cambridge HPC

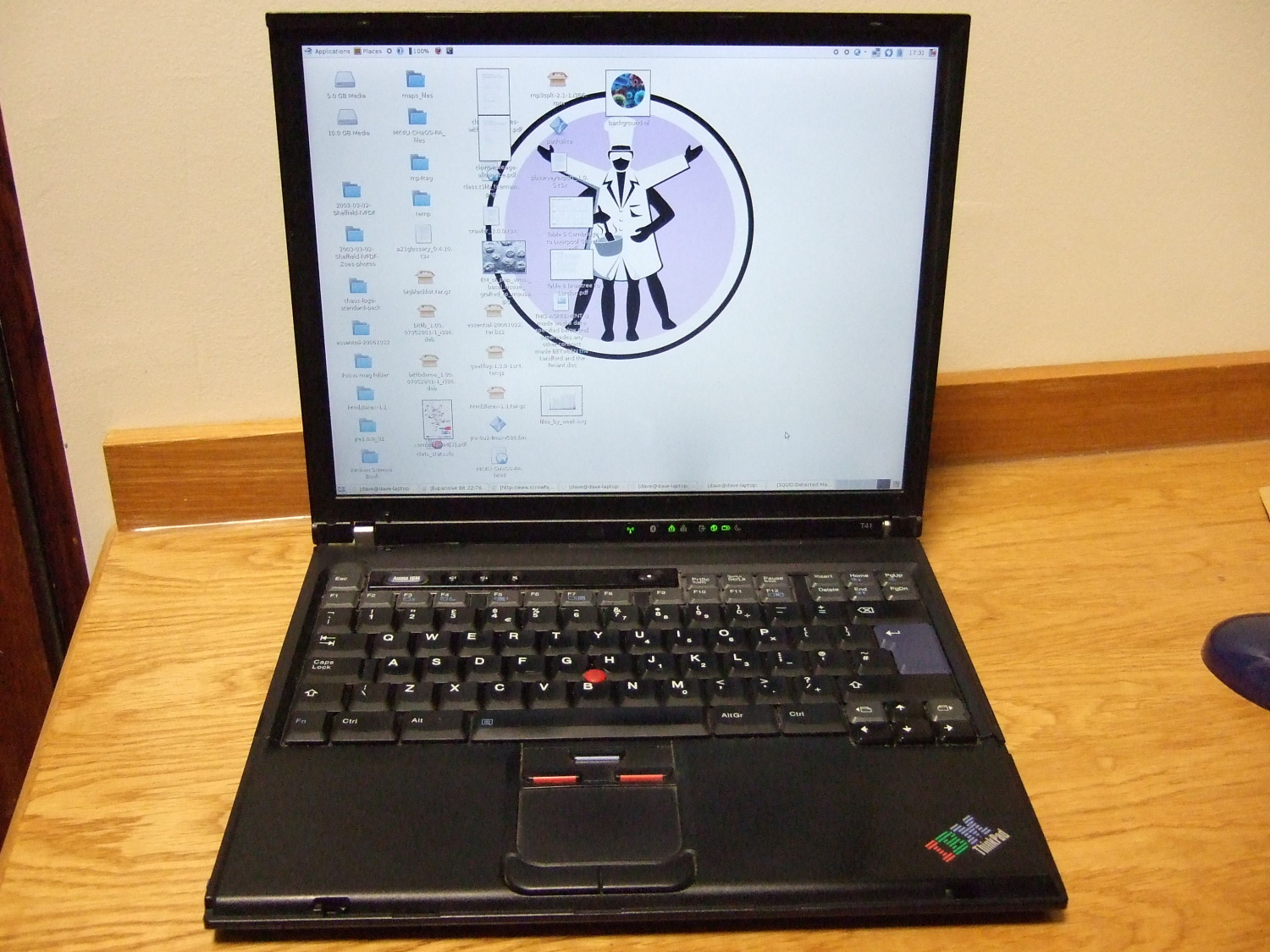

Dave - The Cambridge University high performance computing resource, or HPC, provides computing facilities to researchers at the university. Paul Calleja, Director of the HPC, showed me around their computer system, called Darwin...

Paul - What you're looking at is a rack of commodity x86 servers. We have 32 servers in a rack, and each rack contains 128 processors in total, the same kind of processor you might find in your desktop machine at home.

Dave - So I'm guessing with a supercomputer the important thing is somehow to connect these all together. There appear to be what look like standard network cables, and there are some other connectors which I don't actually recognise...

Paul - We have standard Ethernet networks for our data and our administration, and then a specialist network called InfiniBand for our parallel processing. The InfiniBand network is particularly high bandwidth and low latency, so the time it takes to get a message from one server to another is very low and the amount of data we can send is very high.
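Why both low latency and high bandwidth matter can be sketched with the common latency-bandwidth model, in which one message takes a fixed start-up latency plus its size divided by the link bandwidth. This is a minimal illustration, not a description of Darwin's network: the `transfer_time` helper and the `FAST`/`SLOW` link figures are hypothetical values chosen only to show the trend (the 3 GB/s figure echoes the bandwidth Paul quotes).

```python
def transfer_time(size_bytes: float, latency_s: float, bandwidth_bytes_per_s: float) -> float:
    """Estimate one point-to-point message time with the simple
    latency-bandwidth model: t = latency + size / bandwidth."""
    return latency_s + size_bytes / bandwidth_bytes_per_s

# Hypothetical link figures for illustration only (not measured on Darwin).
FAST = dict(latency_s=2e-6, bandwidth_bytes_per_s=3e9)     # low-latency, high-bandwidth link
SLOW = dict(latency_s=50e-6, bandwidth_bytes_per_s=0.1e9)  # slower, Ethernet-like link

for size in (8, 1_000, 1_000_000):
    fast = transfer_time(size, **FAST)
    slow = transfer_time(size, **SLOW)
    print(f"{size:>9} bytes: fast {fast * 1e6:9.1f} us, slow {slow * 1e6:9.1f} us")
```

For small messages the latency term dominates, and for large ones the bandwidth term does, which is why a tightly coupled machine wants both to be good.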

Dave - So this matters if you've got computers on different sides of the room: if they're working on the same problem, they need to communicate, and doing that as quickly as possible is very important.

Paul - Yes. So in total, we have over 800 servers in this one room. Housed in those servers are approximately 4,000 CPU cores, and all servers can talk to all other servers at once with a bandwidth of about 3 gigabytes per second.

Dave - So what's the advantage of using large amounts of essentially standard hardware over something a lot more proprietary?

Paul - The standardisation has led to a dramatic decrease in price: the price point has dropped 100-fold. Also, the rate of advance in the commodity market is very high. We get a doubling in performance every two years, which comes to us for free from the general advances in the computer industry.
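The "doubling every two years" rule of thumb compounds quickly. A minimal sketch, assuming a steady doubling period (real hardware progress is lumpier than this), computes the multiplier as 2 raised to the number of elapsed doubling periods; `growth_factor` is a hypothetical helper name.

```python
def growth_factor(years: float, doubling_period_years: float = 2.0) -> float:
    """Performance multiplier after `years`, assuming performance doubles
    every `doubling_period_years` (the rule of thumb Paul describes)."""
    return 2 ** (years / doubling_period_years)

for years in (2, 4, 10):
    print(f"after {years} years: {growth_factor(years):g}x")
```

Under this assumption, ten years of commodity advances amounts to five doublings, a 32-fold gain.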

Dave - And to explain more about Darwin, Paul joins us here in the studio. Hi, Paul.

Paul - Hi, Dave.

Dave - So how does Darwin differ from HECToR, which we were talking about earlier, in Edinburgh?

Paul - Both Darwin and HECToR are best-in-class, tightly coupled supercomputers, which means they're designed for maximum performance in how messages are sent around the machine. In that respect, they're the same. Darwin differs from HECToR in that Darwin is a commodity machine made from standard off-the-shelf components, whereas HECToR is a proprietary machine. The processors in both machines are actually commodity parts, but Darwin has a commodity interconnect whereas HECToR has a proprietary one. So the interconnect, the part we've been talking about that makes a supercomputer super, is open in Darwin: basically, we own all the technology and how it's put together, whereas in HECToR the vendor owns the technology.

Dave - So I guess this is an advantage. It means you get more flexibility, and it's cheaper, because if anyone can build the kit, it's going to cost a lot less.

Paul - Yeah, the more of the added value you own yourself, the less the vendor can charge you for, so you can drive price points down. Also, the rate of advancement is quicker, and this has led to a great improvement in supercomputing over the years.

Dave - So what are you actually doing here in Cambridge with your supercomputer?

Paul - Darwin is primarily used for research at the University of Cambridge, and we have over 400 users on the machine from 65 research groups. Those users have generated around 300 publications in the last 4 years, and they're very active doing all kinds of science. I can tell you about some of that.

Dave - So essentially, they decide they want to do something, they write some code, send it to you, and you run it?

Paul - Yeah, there's a range of activity. For example, a recent new activity on the machine is the UKQCD consortium. This is a consortium of researchers from across the UK, Glasgow and elsewhere, and they're doing very complicated calculations looking at the nature of matter. They're looking at the strong interaction, described by quantum chromodynamics, and comparing those calculations to experimental data from the LHC experiment at CERN. And this is very common: a scientist has a theory, does calculations to try to substantiate that theory, and then compares them to experiments. These calculations can be very large.

Dave - So quantum chromodynamics is about the strong force, one of the forces which hold the nucleus of an atom together, that sort of thing.

Paul - Yes, exactly.

Dave - I've heard that actually calculating these things has been almost impossible for a long time.

Paul - Yeah, the grids involved have a million million points in the matrix that you have to calculate, and they need to calculate that over and over again. Then they get estimates of atomic mass which they can compare very accurately with experiment. If the two numbers coincide, you know that your theory is correct. And this is what simulation is used for in many different areas.

Dave - So what else are you up to?

Paul - Another thing we're doing is a collaboration with the hospital, Addenbrooke's, which has a gene sequencing facility with lots of next-generation gene sequencing machines to look at your gene sequence for particular disease cases. They generate all the data up there at the hospital and send it over a 10-gigabit Ethernet link to our machine in the centre of town; we process the data and then send them back the answers.

Dave - So they're attempting to compare lots of different genomes and find out which bits of the genomes are associated with the different diseases?

Paul - Yeah. In the clinic now, it's quite common that you may well be genotyped for particular diseases, and you need those answers back quickly. They don't have the facilities to do the calculations at the hospital and we do, so we've partnered with them for that. So that's quite an interesting one.

Another interesting recent project is the Planck satellite. The astronomy department in Cambridge is collecting lots of data from the satellite, called Planck, on the cosmic microwave background radiation. That's an awful lot of data, and it gives you information about the early universe. So again, we get sent that data, we analyse it with lots of Monte Carlo simulations, and then send them back the results.

Dave - So everything you've suggested at the moment has involved large amounts of data and difficulties handling it, that sort of thing?

Paul - Yeah, this has often been spoken about recently in terms of the "data deluge". The data is increasing incredibly fast: the quantum chromodynamics guys filled up a hundred terabytes of discs in about 4 months. That was meant to last them three years! So we now have around 600 terabytes of data. Next year we'll have a petabyte, which is a thousand terabytes, and it's just growing exponentially. In fact, data requirements are growing five times faster than our computing requirements.
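The storage figures Paul gives can be turned into rough rates. This minimal sketch uses only the numbers from the interview (100 TB meant to last three years but filled in about 4 months, roughly 600 TB now and a petabyte next year) and assumes decimal units, 1 PB = 1,000 TB; the helper names are hypothetical.

```python
TB_PER_PB = 1000  # decimal units: 1 petabyte = 1,000 terabytes

def overrun_factor(planned_months: float, actual_months: float) -> float:
    """How many times faster storage filled up than was planned."""
    return planned_months / actual_months

def fill_rate_tb_per_month(capacity_tb: float, months: float) -> float:
    """Average rate at which the given capacity was consumed."""
    return capacity_tb / months

# 100 TB planned for three years (36 months), actually filled in about 4 months.
print(overrun_factor(36, 4))           # 9.0
print(fill_rate_tb_per_month(100, 4))  # 25.0
# Growth implied by going from about 600 TB now to about 1 PB next year.
print(f"{TB_PER_PB / 600:.2f}x")
```

On these figures the disks filled roughly nine times faster than planned, at an average of about 25 TB a month.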

Dave - So that's going to be a big challenge in the future.

Paul - Yeah. The major challenge at the moment is how you architect your system to allow all that data to flow from the computer to the data and then once the data has flowed there, what do you do with it, how do you store it, how do you analyse it, how do you keep it?
