Why high voltage?

Ingredients

Power is moved around the country from power stations to the places that need it using a system of electrical wires called the National Grid. In some parts of the grid, electricity is transmitted at 400,000 volts before being "stepped down" to the 240 volt mains that is supplied to homes. But why are these very high voltages used? Why not just use 240 volts in the first place? The answer is to minimise the amount of energy that is lost when the electricity flows along cables, as this demonstration shows...

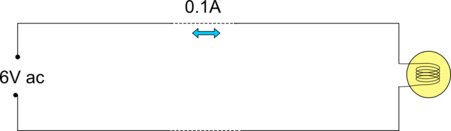

To model the National Grid safely, we used a 6V AC power supply as the power station, a light bulb, and some wires with a large resistance (we added a 300Ω resistor) as the long power cables. The bulb lit only very dimly.

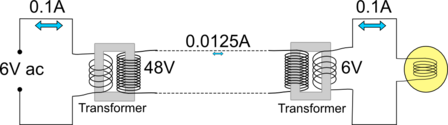

We then added two transformers: one increased the voltage by a factor of 8 for the trip through the power cables, and the other converted it back to 6V at the far end. This made the bulb a lot brighter.

Explanation

We use electricity as a way of moving energy from the place where it is produced - normally a power station, or a battery - to where we want to use it - often the home or workplace.

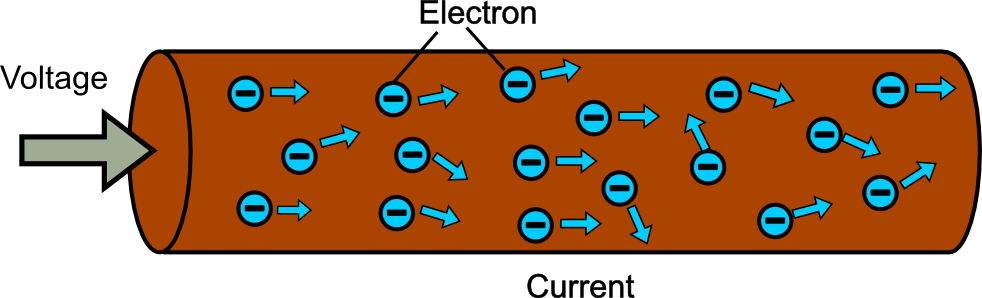

Electricity is the flow of particles called electrons through a material, and the number of electrons flowing past per second is known as the electric current.

The electrons are forced to move by a voltage, which is essentially how hard the electrons are being pushed along the wire.

The power, which is the energy flowing through the wire each second, is the current multiplied by the voltage:

power = current x voltage

This means that you can transmit the same amount of power with a low voltage and a high current or a high voltage and a low current.

At 6V the bulb uses 0.1A of current.

If the voltage is multiplied by 8 to 48V, the current needed to carry the same power will be one eighth as large (0.0125A).
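The arithmetic above can be checked with a short Python sketch. It simply rearranges power = current x voltage; the function name `current_for_power` is made up for this illustration, and the 6V and 0.1A figures are the ones quoted for the bulb.

```python
def current_for_power(power_watts, voltage_volts):
    """Current needed to carry a given power at a given voltage (P = I x V)."""
    return power_watts / voltage_volts


# Power used by the bulb, from the figures in the text: 6 V at 0.1 A
bulb_power = 6.0 * 0.1

# Same power carried at the original voltage and at 8 times the voltage
current_at_6v = current_for_power(bulb_power, 6.0)
current_at_48v = current_for_power(bulb_power, 48.0)

print(f"Bulb power:     {bulb_power:.2f} W")
print(f"Current at 6V:  {current_at_6v:.4f} A")
print(f"Current at 48V: {current_at_48v:.4f} A")
```

Whatever factor the voltage goes up by, the current needed for the same power goes down by the same factor.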

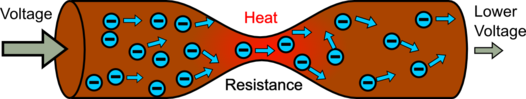

The wires resist the flow of current, a bit like friction resisting your motion as you slide across a floor. It takes effort (voltage) to push a current through a wire, and the thinner the wire, the more effort it takes.

Pushing current through this resistance converts electrical energy to heat, so there is less energy left to do something useful at the other end. The larger the current, the more energy is lost. Transmitting the power at 6V with a high current therefore wastes more energy than at 48V with a low current, which is why the bulb is brighter with the transformers.
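To put rough numbers on this, the heat produced in a resistance is the current squared multiplied by the resistance. The sketch below applies that to the 300Ω resistor from the demonstration, taking the two currents quoted earlier at face value. The name `cable_loss` is invented for this example, and the calculation is an idealised comparison rather than a full model of the circuit.

```python
CABLE_RESISTANCE = 300.0  # ohms, the resistor standing in for the long cables


def cable_loss(current_amps, resistance_ohms=CABLE_RESISTANCE):
    """Power turned into heat in the cable: current squared times resistance."""
    return current_amps ** 2 * resistance_ohms


# Assumption: the cable carries the full current quoted in the text in each case
loss_at_6v = cable_loss(0.1)      # low voltage, high current
loss_at_48v = cable_loss(0.0125)  # high voltage, low current

print(f"Loss at 6V:  {loss_at_6v:.4f} W")
print(f"Loss at 48V: {loss_at_48v:.4f} W")
print(f"Ratio:       {loss_at_6v / loss_at_48v:.0f}")
```

Because the loss depends on the current squared, cutting the current to one eighth cuts the heating by a factor of 64. In the real demonstration the 6V supply cannot actually drive the full 0.1A through 300Ω, so without the transformers the bulb simply gets much less current and glows dimly.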

The higher the voltage, the less energy you waste, so power is moved long distances at very high voltages, as high as 400 000V in the UK. But this produces other problems. If the voltage is very high, an electric current can flow through the air, creating sparks, and can easily flow through your body, electrocuting you, so everything has to be designed carefully and made very large.

Different voltages are therefore used in different places, depending on the amount of power that has to be transferred and how easy it is to make the high voltages safe. Power is transmitted around the country at 400 000V, around a region at 132 000V, around a town on wooden poles or underground at 11 000V, and to your house at 240V.


Comments

think this is a magic trick.



Give equations connecting heat loss due to higher voltage and higher current for same power supply


How about you actually do your homework yourself, and then ask us if we think you've done it right?


It follows from Ohm's law. You can look up the formula. Basically, you square the current and multiply by the resistance. So if you raise the voltage, you lower the current, and the power loss falls with the square of the current. In other words, for the same power delivered, the loss falls as the inverse square of the voltage: multiply the voltage by 8 and the loss drops by a factor of 64.
