This week, we'll strip computer science down to its components and find out what we should expect to see in the next 5 years. We find out about the thinking behind artificial intelligence, what the future holds for Second Life and how neuroscience can help us build truly intelligent computers. Plus, get your sunglasses out early this year for Kitchen Science where we make an LCD monitor vanish.

In this episode

Researchers stimulate Parkinson's Breakthrough

Scientists have stumbled upon a new way to help patients with Parkinson's to move more easily.

Writing in this week's Science, Duke University researcher Miguel Nicolelis and his colleagues have used experimental animals to show how a simple nerve stimulation technique can overcome the symptoms of the disease.

Patients with Parkinson's, which is caused by the loss of brain cells that produce the transmitter chemical dopamine, characteristically develop symptoms of slow movements and also find movements hard to initiate. The symptoms can be partially overcome with drugs including L-DOPA, which works by boosting brain dopamine levels, although the treatment tends to become less effective with time and can also cause significant side effects. In recent years scientists have been able to provide some relief to sufferers by implanting stimulating electrodes deep within the brain to increase the activity in the neural circuits that control movements. However, this surgery is highly invasive and therefore risky.

Instead, Nicolelis and his team took a different approach. They implanted epidural stimulators in the main sensory pathway of the spinal cord and found that animals previously paralysed by the disease showed a remarkable improvement in symptoms. "We see an almost immediate and dramatic change in the animal's ability to function," says Nicolelis.

The team think that the approach, which is much simpler and safer than implanting electrodes deep in the brain, works by blocking abnormal patterns of brain activity seen in the disease. They think that waves of nerve activity caused by the synchronous firing of many different nerve cells build up and make it very difficult for the brain's motor circuits to initiate movements. But stimulating the sensory pathways three hundred times per second disrupts these neurological oscillations, resulting in Parkinsonian animals showing twenty-six times more spontaneous movement than untreated controls.

"Following stimulation the neurons desynchronise, resembling the firing pattern you would see in a healthy mouse that is continuously moving," says study co-author Per Petersson.

Omega 3 – It might be good for you, but it’s definitely bad for fish

The health benefits of eating the Omega-3 fatty acids found in fish may not outweigh the cost to the oceans of our continued fishing, according to an analysis in the Canadian Medical Association Journal this week.

Dr David Jenkins argues that although some studies show that eating fish rich in Omega 3 oils can prevent heart disease and other chronic illnesses, the evidence is not hugely convincing compared to the evidence for the dramatic falls in fish stocks worldwide.

Looking at the results from many individual studies, along with meta-analyses which themselves take many studies into account, the authors found a suggestion that higher Omega-3 consumption could lead to a 15% benefit in the prevention of cardiovascular disease. However, some of the studies they looked at found benefits in only a few situations, and follow-up studies occasionally showed the benefit to have reversed 3 years later.

In contrast, the evidence for falling fish stocks and collapsing populations is as compelling as it is frightening. Fish catches have not increased since the early 1990s, despite increased fishing effort, and the percentage of collapsed stocks has been increasing exponentially since the 1950s.

There are also socio-economic factors to consider, such as the fact that collapsed fisheries in the United States, Europe and Japan mean that these countries are increasingly relying on importing fish from developing countries. This means that these countries either have to allow foreign fishing fleets into their waters, or export their fish to foreign markets, depriving local communities of an important source of protein. Food security is just one contributing factor to political and social instability, and these countries often face nutrition and health challenges.

Even farming fish may not be the solution. To farm salmon, bluefin tuna or sea bass, you need to feed them a high-protein diet of fishmeal and fish oils. Ironically, farming fish puts even more pressure on wild fish stocks: it actually takes between 2.5 and 5 kg of feed fish for every 1 kg of farmed fish produced.
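As a rough illustration of that feed ratio, the arithmetic can be sketched in a few lines of Python. Only the 2.5 to 5 kg range comes from the analysis; the function name and the 10 kg harvest are made up for the example.

```python
# Feed-fish range per 1 kg of farmed fish, as quoted in the article.
FEED_PER_KG_LOW = 2.5
FEED_PER_KG_HIGH = 5.0


def feed_fish_needed(farmed_kg):
    """Return (low, high) kg of wild feed fish for a given farmed harvest."""
    return (farmed_kg * FEED_PER_KG_LOW, farmed_kg * FEED_PER_KG_HIGH)


# Hypothetical harvest of 10 kg of farmed fish (illustrative figure only).
low, high = feed_fish_needed(10)
print(f"10 kg of farmed fish needs roughly {low:g} to {high:g} kg of feed fish")
```

On those figures, a 10 kg harvest would draw somewhere between 25 and 50 kg of fish out of wild stocks.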

There is a potential solution - bacteria, and genetically modified yeasts and plants may be able to supply our Omega-3 needs, but these sources have not been properly investigated to determine what doses would be healthy, and cannot yet supply the demand.

The report concludes by saying: "Until renewable sources of long-chain omega-3 fatty acids become more generally available, it would seem responsible to refrain from advocating to people in developed countries that they increase their intake of long-chain omega-3 fatty acids through fish consumption."

Food for thought.

Ref: David J.A. Jenkins, John L. Sievenpiper, Daniel Pauly, Ussif Rashid Sumaila, Cyril W.C. Kendall, Farley M. Mowat; Are dietary recommendations for the use of fish oils sustainable?; Canadian Medical Association Journal; March 17, 2009; 180(6); pp. 633-637

Sutures of the future

Sutures of the future might well be deployed by a portable ink-jet printer according to scientists in the US. University of North Carolina at Chapel Hill researcher Roger Narayan and his team, with a view to finding better ways to close wounds, have been investigating the sticking power of a collection of proteins used by marine mussels to anchor themselves to the seabed.

As anyone who has ever tried to prise a mussel off a jetty or a rock knows only too well, these proteins are extremely powerful adhesives. "They're based around the amino acid DOPA," explains Narayan, "and because they are a naturally-occurring protein mixture they are likely to be much better tolerated by the body than sutures or artificial tissue glues like cyanoacrylate (superglue) which has been used in the past but can cause toxic effects and doesn't break down in the body". But a key question is how to utilise and deploy these sticky molecules to get them into wounds or surgical sites so that they can join tissues together.

Writing in the Journal of Biomedical Materials Research B, Narayan and his colleagues may have found the answer - an ink-jet printer head. This uses a vibrating piezo-electric crystal to spit out tiny droplets. The team have found that mixing small amounts of iron ions with the mixture results in a very strong glue, probably by encouraging the mussel proteins to stick to surrounding tissue rather than to themselves.

"You can foresee hand-held devices in the future that could spray the correct combinations of the glue mixtures onto wound surfaces," says Narayan. "The use of the inkjet technology gives you greater control over the placement of the adhesive. This helps to ensure that the tissues are joined together in just the right spot, forming a better bond leading to improved healing and less scarring."

Tiger Stripes As ID

New software can identify a tiger from its pelt, helping to catch poachers out in the act.

A tiger's stripes are unique, much like our own fingerprints, so an individual tiger can be identified from its colouring.

Lex Hiby, from Conservation Research Limited, has developed a software system that uses images taken by camera traps, and stitches photos together to build a three-dimensional map of the markings, all the way from the neck to the base of the tail. This 'map' can not only let us identify individual animals in the wild, helping to get accurate population numbers, but can also be flattened and used to identify skins traded on the black market. This has the added benefit of showing where the tiger came from and the date after which it was killed, helping to trap poachers in the act.

It's an accurate technique too. From a collection of between 264 and 298 tigers, the software correctly matched 95% of images that belonged to the same animal.

The idea behind this, using pattern recognition to identify individual animals, has been used for several different species, such as grey seals, cheetahs, penguins and whale sharks. The beauty of pattern recognition is that you do not need your photos to be uniform. In fact, tourist photos have been used alongside those taken by researchers to show that numbers of whale sharks have increased by 1.7% over the last 12 years, according to Jason Holmberg of research organisation Ecocean.

Hiby is confident that this software could form the backbone of a central database, as he writes in this week's Biology Letters:

"An image of a skin that had been taken from one of the tigers in that database could be traced within a few minutes to where and when the living animal was last recorded." It's a simple software solution to help in the fight to protect this endangered species.

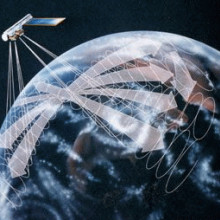

12:26 - Prospecting the Gravity Field

with Chris Hughes

Chris - Also this week, the European Space Agency has launched the Gravity field and steady-state Ocean Circulation Explorer, or GOCE for short. They say it's going to bring about a whole new level of understanding of one of the Earth's most fundamental forces of nature: the gravity field. Dr Chris Hughes, from the Proudman Oceanographic Lab, is down here on Earth with us and is planning to use the data from GOCE to better understand the world's oceans. Hello Chris. Welcome to the Naked Scientists. Tell us, what is GOCE? How does it work?

Chris H - If you imagine having six metal cubes in a box in orbit round the Earth. Because they're all in slightly different places, in slightly different bits of the gravitational field they move differently. Ideally what you'd want to do is track them and measure the relative motion. That tells you how the gravity field is changed from one place to another. You can't do that because they rattle around and bounce off the walls so instead you hold them still and measure the force that you need to hold them still.

Chris - What sort of orbit is this satellite in? Is it going over the poles so the Earth is effectively turning under it, which means that over the course of a one month period it scans the entirety of the Earth's surface?

Chris H - That's right. It's not quite over the poles, it's about 6 degrees off but it covers almost all the Earth, yes.

Chris - Why is this useful to you as an oceanographer? What can we learn by studying the Earth's gravitational field?

Chris H - We want to know what the ocean currents are doing. We can learn an awful lot about those from sea levels, whether the sea surface is sloping or not. It's rather like isobars on a weather map depending on which way the wind is blowing. The sea level will tell you which way the currents are going. If you want to know whether the sea's sloped you need to know which way is up. We know it pretty well, obviously, but we don't know it well enough. We're talking about very small gradients: 1 in 1,000,000 is the gradient that matters. So you need to know very precisely what the gravitational field is in order to define the direction of up.

Chris - Given that the Earth is a sphere why don't we just see gravity being uniform everywhere across the Earth's surface?

Chris H - Because the Earth isn't quite a sphere. Every mountain, every bump, every different mass around the Earth has its own bit of gravitational attraction towards it. If you actually look at the shape of the sea surface what you see is a whole set of wrinkles and bumps and it really looks like a map of the sea floor. Every mountain on the sea floor is pulling the water towards it. What you think of as a nice smooth, round sphere is actually quite wrinkly and bumpy when you look on the very small scale.

Chris - Understanding these currents, how will this inform us about what's going on in the oceans?

Chris H - The ocean is almost half of the climate system. It carries heat around from the equator to the poles in just the way the atmosphere does. It keeps part of the Earth warm, cools other parts and is very important for things like fisheries but also climate in Europe in particular. It's very difficult to measure. There's so much going on in the oceans it tends all to happen on smaller scales than it does in the atmosphere. There are fewer measurements; it's harder to see into. We can't measure much in the ocean from satellites. These measurements of currents are really going to give us a huge amount of new information about the basic patterns of flow in the ocean that allows us to understand how the heat gets transported around.

Chris - How long is the acquisition of the data going to take? How long before you can come back on this programme and tell us - this is what we've found?

Chris H - It's going to be at least a year. It's going to take six months or so before the whole system is calibrated and has got down to operational measurements. There's a lot of work once the data has been collected: turning that into useful information so that we can calculate the sea level relative to the gravitational field. There may be some early results somewhere around Christmas time but it's going to be many years down the line before we've got perfect observations that we can get out of it.

Chris - That was Chris Hughes, from the Proudman Oceanographic Lab.

Can we dig our way from the UK to Australia?

Chris - I think the answer is probably not - purely because of pressure and temperature constraints. Also the fact that the Earth is pretty liquid inside as far as the inner core. Therefore you'd have to contend with huge amounts of pressure, huge amounts of temperature and I don't think we have the energy or the building materials that would be capable of withstanding that. If you think about it the Earth has a radius of 5000-6000km. If you're standing on the Earth's surface you're feeling one atmosphere of pressure. The atmosphere above you is about 50km high. If you were going to go 5000km to the centre of the Earth you'd therefore have 100 times the amount of atmospheric pressure on you, so the pressure just from the atmosphere would be so huge that just trying to move through that kind of gas would be like running into a brick wall. Very tricky indeed.

Disappearing monitors - the power of polarisation

Why do we stick to ice?

Chris Smith answered this question...

Chris - I've had a nasty experience because I used to work in a lab where we had lots of -70 (degrees Centigrade) freezers. We used to develop various gels and things in those -70 freezers. If you weren't careful and didn't put some gloves on when you went into the freezer and grabbed your developer out, then your skin could freeze onto the rack. When you removed your fingers and let go then it left a lovely fingerprint on the thing. That quite literally was a fingerprint, because it left the surface layers of skin from your finger!

Ice is sticky for that very reason. Ice itself is so cold. Your body secretes tiny amounts of liquid, sweat, which is a salty fluid, onto your skin surface, and that actually makes your skin stickier. This is why we have it. It's for grip. If you then touch your skin onto a very cold ice surface, the ice freezes the liquid on your finger.

Because that liquid is a fluid and it has got into all the nooks and crannies on your finger, it then freezes solid and will form a very tight bond between your finger and the frozen surface, the ice. You get stuck to the surface.

If it's an ice cube, it's okay because there's usually enough heat flowing through your fingers to re-melt that transient freezing, and then you can detach yourself. In the case of a -70 freezer or even colder (people in the Antarctic have to be very careful about this kind of thing) it doesn't warm up enough and you can end up permanently frozen to the surface or you can do yourself quite a bad injury.

So that's why ice is sticky. You get literally frozen to the spot!

How does Blu-tack work?

Chris - I think there's two aspects to this. One is in the same way as the water gets in to all of the nooks and crannies both in the skin and the surface that you touch - in the same way as when you lick your finger to turn a page - you create more friction between your finger and the surface. The water forms an attachment on that surface. Blu-tack is plastic. In other words it can deform plastically. It gets into the nooks and crannies of the surface you're sticking it to and the surface it's already stuck to which makes it sticky.

The other point is that when you press it onto a hard surface the Blu-tack forms a very smooth, flat surface against that hard surface. This excludes air. In order to get the Blu-tack back off the surface you've effectively got to break a vacuum. The atmosphere is helping to hold the Blu-tack stuck onto the surface.

That's why I think it's sticky.

Ben - That's the same reason why, if you get two sheets of glass and layer water between them and press them together to exclude the air it's really hard to peel apart even though water isn't sticky at all.

Chris - Two microscope slides are almost impossible to separate if you put a drop of water and then put the two glasses together. You've got to twist them off each other and you can't pull them apart because the atmosphere is squeezing down on you. Every square metre is feeling a force of ten tonnes: the weight of a London bus. On our bodies every square metre of our body's got ten tonnes of atmospheric pressure on it.
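The ten tonnes figure can be checked with a quick back-of-envelope calculation. This sketch assumes standard sea-level pressure (101,325 pascals) and standard gravity (9.80665 m/s²); neither number is quoted in the show, and the function name is purely illustrative.

```python
# Assumed constants (not from the show): standard atmosphere and gravity.
STANDARD_PRESSURE_PA = 101_325.0  # newtons per square metre at sea level
STANDARD_GRAVITY = 9.80665        # metres per second squared


def equivalent_mass_tonnes(area_m2):
    """Mass whose weight equals the atmosphere's push on the given area."""
    force_newtons = STANDARD_PRESSURE_PA * area_m2
    mass_kg = force_newtons / STANDARD_GRAVITY
    return mass_kg / 1000.0


print(f"{equivalent_mass_tonnes(1.0):.1f} tonnes per square metre")
```

Under those assumptions the answer comes out a little over ten tonnes per square metre, in line with the figure Chris gives.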

23:46 - The Future of Computing

with Chris Bishop

Ben - It's hard to think of any aspect of our lives that isn't dominated by computers, and that shows little sign of stopping. Joining us now is Professor Chris Bishop, from Microsoft Research, to tell us about one way that computers may change in the future - they might become more intelligent. But first, just what do we mean by Computer Science?

Chris B - What I mean by computer science is really the set of underlying ideas; the mathematics and concepts that make digital technology possible. I think it's also worth saying what computer science isn't. To my mind, computer science is not really about how to use computers. Something that we teach our children in school is ICT skills. We teach them how to use computers, how to use software. That's a very important set of skills. That itself is not computer science. It's the top level, the normal use of computers. The next level down is really how to modify computers, how to get them to do new things. That's really about programming and software development. I think it would be great if we taught even more of that in schools. We've barely scratched the surface of that in the school curriculum. It would be lovely to teach more of it. Underneath that again is this sort of third level, the foundation of the subject. That's computer science. That's the set of mathematics, the concepts that make it all possible. While computer technology evolves incredibly rapidly, and next year you've a whole new set of gadgets and the year after it's another set of gadgets again, the concepts of computer science are much more fundamental. Some of them have been around for half a century and they evolve much more slowly. I think it would be just wonderful if we could show children in particular a lot more of these basic long-term concepts.

Ben - It's really an understanding of the algorithms, the maths that lie at the very heart - completely regardless of the technology and what you're then doing with it. You need to understand how the numbers work in order to build up these layers that eventually get to a word processor or a shoot 'em up.

Chris B - That's exactly right. A famous computer scientist called Dijkstra once said that, "Computer science is no more about computers than astronomy is about telescopes." The ideas of computer science apply whether you're talking about silicon or whether you've built your computers out of gear wheels or whether you've built it out of DNA or chemistry in some way. These are very general concepts about the limits of computation and the capabilities of computation in the general sense.

Ben - As we've said you're the Chief Research Scientist at Microsoft Research. What is it that you do there? We think of Microsoft as being the people that make Windows that a lot of our computers run on. My own laptop runs on Windows. What do you do?

Chris B - Microsoft Research, which is about 1% of the whole in terms of people, is the basic research component of Microsoft. You can think of us as, in many ways, functioning like academics. Our researchers have complete freedom to choose the research they do, to publish it where and when they choose and to collaborate fully with academics and so on. At the same time they may also develop ideas that might have commercial relevance. Some of those ideas might be fed into the rest of Microsoft. Now, in Cambridge (which is the European Lab of Microsoft Research) we have about 120 scientists covering a very broad range of fields, including many of the mainstream areas of computer science like security and systems and networking and programming language theory and so on. We're increasingly diverse. We have people in machine learning like myself. We have vision researchers, even ecologists and biologists these days. One of my main roles as chief research scientist is to promote cross-disciplinary interaction. There's a big danger in this very heterogeneous environment that everybody stays in their own little stove pipe and they don't really talk to each other. I try to find mechanisms to mix people up and get them to understand the ideas and problems in each other's fields. I hope that will promote interaction between the different disciplines because I'm a great believer that a lot of the really new ideas actually emerge at the intersection of different disciplines. Genuinely new ideas in science are actually quite rare and very hard to find. It's slightly less hard to take a good idea from someone else's field and import it into your own field where it may be quite novel and have quite an impact.

Ben - We had quite a good example of this on the show a few weeks ago with Alyssa Goodman who was using the software that's been developed for understanding and visualising MRI scans to look at deep space. It really is true this sort of multi-disciplinarianism is the way science is moving. Going back to something you said you were working on yourself: machine learning. The idea of intelligent machines has been around for a while but what do we really mean? How can we get a machine to learn?

Chris B - The idea of intelligent machines goes right back to the dawn of computing and certainly Alan Turing, the famous Cambridge mathematician who was one of the founders of modern computer science, was very interested in the idea of building intelligent machines. It's obviously an enormously difficult problem. Technology in many respects has evolved incredibly quickly. You now have machines that are extremely good at multiplying numbers together, far better than humans. There are other tasks that people and animals are very good at that we tend to characterise as intelligence. Take visual recognition: simply looking at a visual scene, picking out the different objects and understanding what's happening. It has proved immensely difficult to build those capabilities into a computer. Over the years we've been making good progress. There's a long way to go before we have a machine that has genuine intelligence.

In fact, although perhaps 30 years ago people sought to build machines that would be intelligent in a high-level sense (machines you could have a conversation with about philosophy or culture) we've sort of abandoned that. It's really just way too difficult. We've taken a bottom-up approach, more of a signal-processing approach. We say let's look at a simpler task that machines are not yet good at but that people are good at, and an example I gave already was recognising objects in the visual world. It's an immensely difficult problem. It appears to be trivial. I can turn around. I can say: well look there's a glass, there's a table, there's a chair, there's a microphone in front of me and so on. I can recognise these objects. Actually what your eye sees is a pattern of light and dark and colour that is hugely variable. No two chairs look the same, and the pattern of light on the retina varies as the chair is moved closer or farther away, or rotated, or as light is scattered off other objects. That changes the colour of the light and changes the colour of the chair, so there's this huge variability. Our brain, I think in some way we don't understand yet, mysteriously takes away all that variability and says, no that's still a chair. If we could build a similar capability into machines it would be very powerful technologically. It's an outstanding problem that we're just beginning to make good progress with. It's one of the fast-moving frontiers of machine intelligence at the moment.

Ben - It sounds exciting. It is true that we see a chair but there are so many different varieties that if we had to programme this into a computer it would be an enormous database of things they need to look at. That was Edinburgh University's Professor Chris Bishop. So in the future our computers may be more intelligent, but that probably wouldn't stop people getting distracted by games of minesweeper!

31:00 - The Underbelly of Second Life

with Mike Hobbs

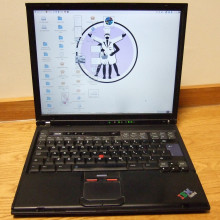

Chris - As you may have noticed, here at the Naked Scientists we're fans of Second Life. It's an interesting way to meet people from all over the world and do things like listen to great radio shows too. But the virtual world needs a great deal of technology and artificial intelligence to keep it going, as Meera Senthilingam recently found out when she met Mike Hobbs at a special evening which was held by Anglia Ruskin University as part of the Cambridge Science Festival.

Mike - Second Life is a lot of things to a lot of people. Essentially it's a 3D virtual world that allows users to create images, sounds and activities within an environment that supports this. The key thing that most people end up doing is communicating with other people. It's a way of mediating communication.

Meera - Obviously a lot of complicated technology is involved in creating Second Life. Would you be able to summarise just the amount of technology involved in creating such a virtual world?

Mike - The basic unit of Second Life would be a sim or a simulation which is a square area represented on a map. That is essentially produced by a core on a chip. Computer chips these days can have dual cores or quad cores. Many of these chips go together to make up entire racks of computing and so you can find we have tens of thousands of individual areas all stuck together. Each one of those is supported by a core on a chip on a board, in a computer, in a database in a service engine. Everything that you see and everything that you do is bits and bytes somewhere. It's entries in a large number of databases that recall where you've been, what you're doing, what you're trying to see at the moment and what someone else is seeing you do as well. It has to be quite accurate so that your view of the world and someone else's view of the world are kept in sync: quite a technological feat!

Meera - When Second Life first came about it was thought it might just dominate the world. Do you think this is still going to happen with it?

Mike - No, I think Second Life is a part of the internet and has a particular technological base and technological limitations. It actually takes quite a lot more effort to generate these things than a lot of people may first assume. I suspect the air conditioning unit for the servers is taking up as much power as a small village or town. If you scale that up you see there are going to be fundamental limits.

Meera - Your background is in artificial intelligence. To what extent do you think artificial intelligence is being used in Second Life and to what extent could it be used in Second Life?

Mike - It's been used quite a lot because of the fairly powerful level of scripting. There's a language in Second Life that allows you to get objects and avatars to react in certain ways so you can programme interactions, which is different to a lot of game environments, which are more limited.

Meera - This evening you showed us the possibility for avatars to own dogs and this uses artificial intelligence, doesn't it?

Mike - Yes, the dogs have quite a sophisticated level of artificial intelligence. They have a basic set of actions. The user doesn't see the programming languages around them. They interact with them by asking them to do things like beg/howl and they can also add these actions together to get dogs to do more complicated sequences of actions. The dogs also have a random behaviour mode where they will do something out of their repertoire. If you praise them and tell them they're a good dog they will be more likely to use that particular action.

Meera - These dogs are actually being conditioned in the same way a real dog would.

Mike - Yes, they're being trained. In fact there are dog training classes or more accurately owner training classes. The dogs don't need training. It's the owners that need training on how to interact with the dog.

Meera - If it's possible for people to have dogs what else do you think is possible?

Mike - Certainly other animals. I know there's a big equestrian sector and there are more mundane activities such as vending machines and chat bots that will interact with you and say good morning. Greeting bots can be really annoying. They come up and say, "Hello, how are you - here's a card, read this, do that - can I take you on a tour," and that sort of thing. There are little bits of animated activity that give the impression of an artificial intelligence.

Meera - What would you say the benefits and advantages of using Second Life are?

Mike - Well, they're multiple. On a personal level I think it's a great way of interacting and meeting people because it's very easy to fall into chatting with people. I was wandering around an art exhibition and saw this annoying person actually dressed as a witch flying around the room on a broom. It turned out to be the artist and we had a nice conversation about how inspiration was created and I got a much greater insight than I would do just by looking at the static art. There's the communication side of things and the exploration side. There used to be a representation of Paris. There's certainly a representation of Krakow in Poland, and science exhibitions, and the National Physical Laboratory has interesting stuff going on.

Meera - Finally, where do you think Second Life is going next? In 5 years what do you think Second Life is going to be like?

Mike - To a certain extent Second Life is ahead of the curve. I think more things will be more like Second Life. I think there are some fundamental limitations as to how big it's going to get. I think the richness and quality of what's going on in it will improve.

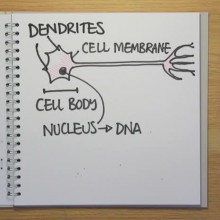

37:48 - The Human CPU: from Computer Science to Neuroscience.

with Demis Hassabis

Chris - We're joined by Demis Hassabis, who traded in a career designing and programming computer games such as Theme Park to become a neuroscientist! Tell us your story. You started off as a computer science student...

Demis - That's right. So my undergrad degree was in Computer Science at Cambridge and I was taking a traditional route in computer science then. When I left I set up my own computer games company, having already programmed some games like Theme Park before coming up to Cambridge. It was a natural progression for me to do that. I set up my own company in Camden in London and we grew it up to 65-70 people and created several games for big publishers around the world. And then about 3-4 years ago I decided that the games industry was going in a slightly different direction to the way I thought it would when I first got into games in the early nineties. It was becoming more of a big-business, big-budget, Hollywood-type industry where there was less and less room for creativity, I was feeling.

Chris - Do you think the financiers noticed the scale of this market? Previously it was a bit geeky, bit niche, not a very big market - still cost quite a bit. Because obviously, you're saying big teams of people were needed to do this but the profits weren't huge so no one was that interested. Suddenly people realised you can tether a computer game to a Hollywood blockbuster, you can make a fortune.

Demis - Yeah, I think partly the realisation of how big a business it was, but I think the main problem, and the reason for the conservatism, if you like, in a creative sense, was that games became very expensive to make. The graphics got so sophisticated you needed teams of - a triple-A game now would easily have 50 artists on it. The costs of that are just huge. Because it is costing 10 million pounds for one game the money men at these publishers would need to be more sure there was definitely going to be a return. This means linking it in with a Hollywood franchise.

Chris - That obviously was incompatible with your view of what you wanted to do. So you did the rather unusual thing of taking a side-step into neuroscience.

Demis - Yeah, that's right. Although I had been in the games industry for a long while, underlying all that, all the games I'd been involved with designing and programming actually contained a lot of AI. Most of the games were big strategy

Chris - Artificial Intelligence, for the uninitiated like me.

Demis - That's right. All the games I wrote, like Theme Park and Republic and Evil Genius, involved simulations. Most of them involved hundreds of little computer people coming in and getting involved with the game environment. Most of the games, like Theme Park, involved you manipulating that environment and seeing how these autonomous agents reacted to what you were doing. Those were the kinds of games I found fun to play and they were the kinds of games I found fun to create. But underlying all of this was my passion. My main passion is actually in artificial intelligence and, related to that, as soon as you start thinking about what artificial intelligence is, you start thinking about how it is that the mind achieves these end-goals.

Chris - You didn't get hooked on your own games, did you?

Demis - No. Actually after you've worked on a game for 3-4 years you're sick of the sight of it in general, even if it's the best game ever!

Chris - It used to take me about ten minutes to get bored of them. Some of the strategy games I think are terrific because they forced you to think in a certain way. I was a very big fan of text games in the early days. Largely computers were rubbish and the only thing they could do was to generate spurious streams of text that you could read laboriously. They taught me amazing language skills. I think it's partly responsible for why I have an incredible memory for text and words and facts. You had to remember effectively a graphical representation in your head of the text environment that was this computer adventure. I think that probably had a huge brain training effect on me.

Demis - Absolutely. I think those early games left a lot more to your imagination in the way a book would, a great book. It exercises your imagination, whereas all the latest flashy graphics, although they look very beautiful, obviously leave less need for visualisation and creative powers.

Chris - Now just very briefly, how did you develop an interest in neuroscience? How did you then take those skills that you had in computer programming to start answering important questions about how the brain works?

Demis - I didn't really know much about neuroscience before I did my PhD but I did a lot of reading and it struck me that a computational approach, or an understanding of algorithms or the basic computer science that Professor Bishop was talking about, is actually a useful approach to take in looking at how the brain works. In general most of the people I work with, and most people in the field, are from a life sciences or medical background which, of course, is incredibly useful from an anatomical point of view. There are actually relatively few people in the neurosciences field that look at the brain in a computational way, a machine-like way, which can give other insights. It's not a machine, the brain, but a lot of the things it does are relatively machine-like.

Chris - And the problem you solved most recently in a nutshell, what was that?

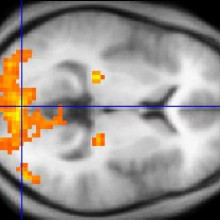

Demis - My most recent study involved trying to accurately predict where someone was standing in a virtual reality room whilst they were lying in a scanner, just from their brain scans.

Chris - Why is that so difficult a problem to solve? I thought there were cells in the brain that fire off when you go into a certain place. We can just read what that activity is, can't we?

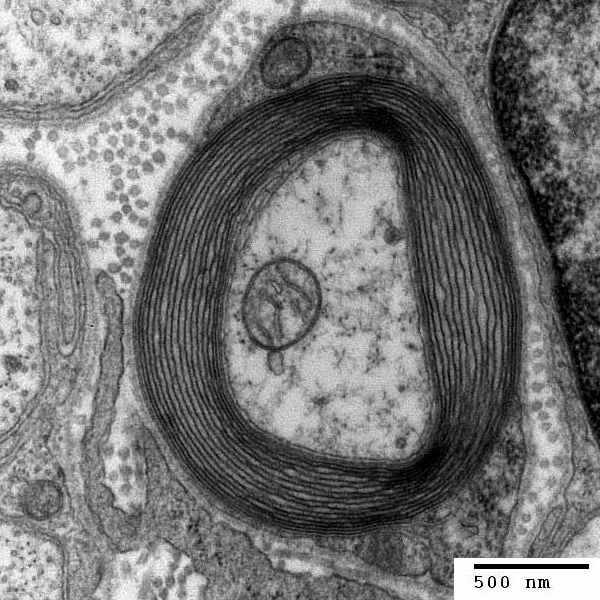

Demis - It's been known for a good 30 years, from experiments that were done in rats, that there are cells that tell you where a rat is positioned in an environment or a pen. No one really knew what those cells looked like on a population level, if you were to look at a million of those cells at once, which you can't do with single cell recording from electrodes in rats' brains. What I wondered was whether, if you could have this global eagle's eye view of the whole population of cells, that might tell you something different that you couldn't see on the individual cell level.

Chris - Is this supposed to be a model or a representation of what's going on in the real world? The person you worked with, Eleanor Maguire, very famously worked out that cab drivers have bigger bits of their brains through navigating round London. Are you trying to basically understand how a cab driver finds his way around London?

Demis - We're trying to understand those findings, really. We know the hippocampus is vital for spatial navigation and spatial memories but we still don't know what it is about the environment that is encoded in those memories and is encoded by those neurons and how and why it's doing that. You can't carry a two tonne brain scanner round with you. The best thing you can do is to record people whilst they're navigating round a very realistic virtual reality environment.

Chris - When you do this, what did you see and how does this influence our understanding of how the brain tells us how to get from A to B?

Demis - Brain scans work at quite a low resolution compared to single cell recording. When you look at one pixel, if you like, of a brain scan, which is called a voxel (a 3D pixel), that contains about 40,000 neurons. Our pattern recognition algorithms need to classify which location a scan was related to. It needs several hundred voxels to be active to train a pattern recognition algorithm with. What that shows is that spatial memories must be represented by very large neural population codes, including probably between two and five million neurons.
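To give a feel for what "training a pattern recognition algorithm" on voxel activity means, here is a minimal Python sketch. It is not the method used in the study: it uses a simple nearest-centroid classifier, and the location labels and four-voxel activity patterns are made-up toy values. The idea is to average the patterns recorded at each known location, then label a new scan with whichever location's average it most resembles.

```python
def centroid(patterns):
    """Average a list of equal-length voxel patterns, voxel by voxel."""
    n = len(patterns)
    return [sum(values) / n for values in zip(*patterns)]


def train(examples):
    """examples maps a location label to the voxel patterns seen there."""
    return {label: centroid(patterns) for label, patterns in examples.items()}


def squared_distance(a, b):
    """Squared Euclidean distance between two voxel patterns."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def predict(model, pattern):
    """Return the location whose average pattern is closest to this scan."""
    return min(model, key=lambda label: squared_distance(model[label], pattern))


# Toy training data: two locations, two scans each, four voxels per scan.
training = {
    "corner_A": [[0.9, 0.1, 0.8, 0.2], [1.0, 0.0, 0.7, 0.3]],
    "corner_B": [[0.1, 0.9, 0.2, 0.8], [0.0, 1.0, 0.3, 0.7]],
}
model = train(training)
print(predict(model, [0.8, 0.2, 0.9, 0.1]))  # prints "corner_A"
```

A real analysis would work with hundreds of voxels per scan and far noisier data, which is why many active voxels are needed before the classifier can tell locations apart.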

Chris - Where previously we thought it was just the odd cell that fired off. Now you're saying there's huge populations of cells that join together. They code specific locations.

Demis - That's right. Also it says something about the way that they're clustered and structured. We found that these cells were also clustered quite closely together whereas previous rat literature suggested that perhaps they were randomly and uniformly distributed across the hippocampus.

45:21 - Chameleon skin foods?

We put this to Dr Stephen Juan, Ashley Montagu Fellow for Public Understanding of Human Sciences at the University of Sydney.

There are substances that can turn your skin different colours. One of the most famous ones is carotenemia which is when you eat too many carrots. Your skin can turn yellowish or orange. It's a benign condition, doesn't seem to be related to anything but if you eat too many carrots the beta carotene builds up in your system and you turn into the colour of a carrot: first a little yellow and then a little orange. There was an interesting study in 2006 in Paediatric Dermatology by Royal Liverpool Hospital that showed that carotenemia can come from eating green beans as well. There are other vegetables, like yams, where the colour change has been known to happen, and some fruits as well. Yes, you have to be very careful. Of course it's going to show up in lighter skinned people first but it'll happen to anybody. By the way, speaking of carrots the old question is what about carrots improving eyesight? Yes, carrots do improve the eyesight a little bit but only if you are vitamin A deficient to begin with.

How do CDs, DVDs and hard discs store information?

We put this to Chris Bishop, Microsoft Research

That's a good question. CDs and DVDs work in slightly different ways but they have something in common, which is that like all computer storage systems they use binary. All the information's expressed in terms of strings of 1s and 0s or 'on' and 'off.' In a hard disc that's represented magnetically. Each bit is represented by a tiny magnet built into the surface of the hard disc and if it's facing north-up it's a 1 and if it's south-up it's a 0. The head that reads this information can also flip the magnets and so it can write information to the disc. The CD is rather similar. It also has these 1s and 0s but they're represented rather differently. They're represented by little pits. When a disc is written a laser burns little pits into the surface of the disc and another laser can read back those pits. If there's a pit there might be a 0 and if there's no pit it might be a 1.
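The binary idea can be sketched in Python. This follows only the simplified picture given in the answer (north-up for 1, south-up for 0); real discs layer more elaborate encoding and error correction on top, and the function names here are purely illustrative.

```python
def text_to_bits(text):
    """Turn text into a string of 1s and 0s, eight bits per byte."""
    return "".join(f"{byte:08b}" for byte in text.encode("utf-8"))


def bits_to_text(bits):
    """Reverse of text_to_bits: read the bits back eight at a time."""
    data = bytes(int(bits[i:i + 8], 2) for i in range(0, len(bits), 8))
    return data.decode("utf-8")


def bits_to_magnets(bits):
    """Simplified hard-disc picture: N (north-up) for 1, S (south-up) for 0."""
    return "".join("N" if bit == "1" else "S" for bit in bits)


bits = text_to_bits("A")
print(bits)                   # 01000001
print(bits_to_magnets(bits))  # SNSSSSSN
print(bits_to_text(bits))     # A
```

The letter "A" is stored as the number 65, which in binary is 01000001, so on this simplified disc it would occupy eight tiny magnets.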

Can you build a mind-control helmet?

We put this to Demis Hassabis of the Institute of Cognitive Neuroscience

Demis - Well at the moment we're very far away from that kind of technology. So far all the technology that is being used in neuroscience is passively reading the electrical activity the brain is creating itself, rather than actually influencing it the other way. I do know of some rather experimental studies that have been done trying to connect chip board to rat brains and trying to control the way they navigate. As far as I know those are very experimental at the moment. Nothing really practical has come out of that yet.

Chris - I believe there was a researcher in Europe who showed that if you apply an intense magnetic field to people's heads while they are learning things it helps them remember them better. He was doing this with word learning. He was getting students to sleep with a magnetic field round their head. I think it improved their ability to learn, probably because the brain's an electromagnetic organ.

Demis - There may be ways. You could do it neuro-chemically as well with some drugs. There are probably ways of changing and up-regulating entire systems in the brain but that's very different from influencing a specific thought or getting them to specifically think about a key episode, as well.

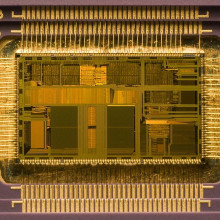

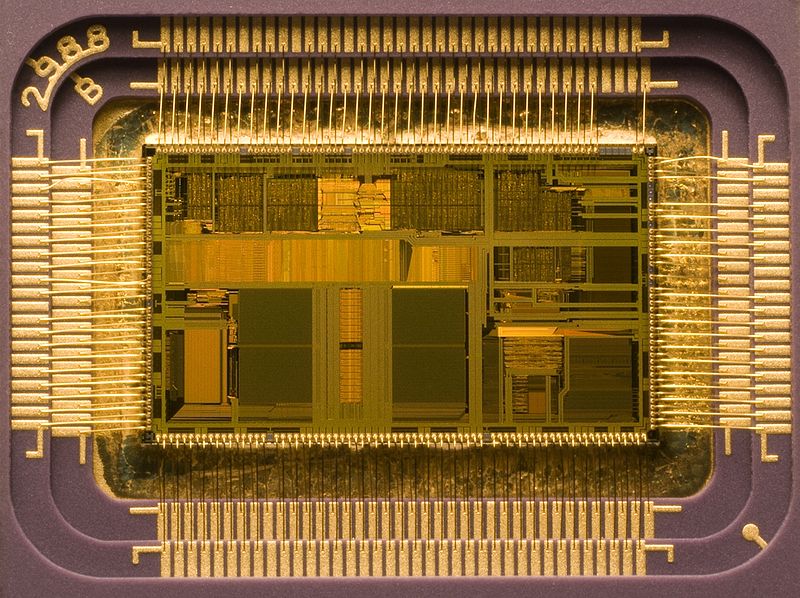

How will faster computer processors be made in the future?

We put this to Chris Bishop from Microsoft Research

Computer processors have been doubling in speed every two years for the last fifty years or so and it's been driven by something called Moore's Law which says the number of transistors on a processor keeps doubling every two years. As the transistors have got smaller and smaller we've fitted more on the chip. We've started to run into some problems. One of the problems we're hitting already is to do with the heat density that's produced by all these transistors in a tiny space switching on and off. Right now the heat density on a chip is equal to that on a hotplate on a cooker. If we carry on the way we've been going then in ten years' time that heat density will equal that of the surface of the sun. We have to find a new way forward. The main approach that we're adopting is to do what we call parallel computing. Instead of trying to make each individual processor run faster we have several processors side-by-side, all working on the problem together. Using that technique of parallel processing we should be able to continue to get processors collectively to run faster and faster and to continue this doubling every two years for a good many years if not decades to come.
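As a rough check on what "doubling every two years for fifty years" adds up to, here is a small Python sketch. It assumes perfectly steady doubling, which is an idealisation, and the `growth_factor` function is just an illustrative helper.

```python
def growth_factor(years, doubling_period=2):
    """Overall multiplication after steady doubling every doubling_period years."""
    return 2 ** (years / doubling_period)


# Fifty years at one doubling every two years is 25 doublings.
print(growth_factor(50))  # 33554432.0
# Ten more years would add another five doublings, a further factor of 32.
print(growth_factor(10))  # 32.0
```

Twenty-five doublings multiply the starting figure by more than 33 million, which is why even a small slowdown in the doubling rate matters so much.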
