What the future holds for digital data storage goes under the spotlight this week - how can we ensure that what we record today - on film, discs or up in the cloud - remains readable for years to come? Plus, news of what the Planck probe has revealed about the early Universe, giant squid, an update from the Mars Curiosity mission, eye implants and nanoparticles to track stem cells...

In this episode

01:04 - Pushing forward with Planck

Pushing forward with Planck

An analysis of data collected by the Planck Telescope supports a period of rapid expansion shortly after the big bang.

The Planck telescope was launched in 2009, with a range of scientific aims, including observing the Cosmic Microwave Background Radiation, the "glow" left behind from the big bang.

These latest results mark the most detailed ever study of the CMBR, improving on previous data collected by the Cosmic Background Explorer (COBE) and the Wilkinson Microwave Anisotropy Probe (WMAP) missions.

The CMBR comes from a period when the universe first began to cool, just a few hundred thousand years after the Big Bang. The very early universe was permeated by a hot "soup" of subatomic particles. Only once the first neutral atoms of hydrogen and helium condensed out of the plasma did the universe become transparent enough for photons of light to travel freely. This is the light that we now see as the CMBR.

Tiny temperature variations in this radiation can give clues as to the structure of the early universe, allowing scientists to refine their models. The data released so far support the idea of a period of rapid expansion shortly after the big bang, and offer tantalising hints as to what caused this expansion...

06:17 - Genetics solves Giant Squid Mystery

Genetics solves Giant Squid Mystery

The enigmatic, tentacled beast that sparked the legend of the kraken has revealed its secrets to a team of Danish geneticists.

Dr Tom Gilbert from the Natural History Museum of Denmark and his colleagues have discovered that the entire world population of giant squid belongs to a single species.

And despite the fact that the squid were found or caught all over the world - from the waters off Japan, to Europe to the US, the painstaking genetic analysis has finally revealed their secret - almost 150 years after the creature was first described.

Dr Gilbert was able to make the discovery thanks to colleagues around the world who took samples from the 43 best specimens of giant squid that have been caught. Most specimens floated to the surface after they died, or washed up on coastlines. Some have even been found inside the bellies of beached sperm whales. A few have been filmed and photographed alive in recent years.

The results suggest, Dr Gilbert says, that as they mature, the giant squid simply drift around the world on the ocean currents, before diving to the deep ocean, which is where the mature squid live and mate.

There is still a huge amount to learn about these sea monsters though. The depths at which they dwell mean that no one has ever seen them mate or give birth, no one knows how fast they move, and it isn't even clear what they eat.

These new results about the mysterious giant squid are released, fittingly enough, on the 200th anniversary of the birth of the Danish naturalist and polymath Japetus Steenstrup (born in 1813), who was the first to explode the myth of the kraken - the tentacled beast that was said to attack ships - by describing the species for the first time and revealing that the mysterious sea monster of legend was in fact a giant squid.

10:37 - Getting 'Curious' About Mars Data

Getting 'Curious' About Mars Data

The latest data from the Curiosity rover on Mars indicates that the planet's surface could have supported microbial life in the past.

The findings come from chemical analysis of rock drilled from what appears to be a dried-up river or lake bed in an area called Yellowknife Bay, using X-ray diffraction and gas chromatography.

The analysis showed that the rocks contain clay minerals, particularly ones resulting from the reaction of igneous materials - molten rock pushing up from beneath the planet surface - with water. From the minerals formed, and the presence of calcium sulfate in the sample, scientists can also tell that the water had relatively neutral pH and was not very salty.

The probe also found sulfur compounds in varying oxidation states, as well as some carbon compounds. Finding all of these things together points to an atmosphere in which microbes could have survived. That said, just finding evidence they could have survived doesn't mean there actually were ever any microbes present.

This is the latest data from Curiosity, which has been exploring the surface of Mars since August last year. After testing its instruments, the rover has sent back a steady stream of data about the atmosphere and surface of the Red Planet.

14:09 - Repairing Eyes With Plastic

Repairing Eyes With Plastic

Dave - Scientists from the Italian Institute of Technology in Genoa have managed to stimulate a retina just by using a piece of plastic and some light. So, there are quite a lot of diseases which cause degeneration of your retina, things like retinitis pigmentosa and macular degeneration, which are very common, especially in elderly people, and in which your retina can't detect light, so you suddenly go blind.

Chris - So, critically in those conditions, the photoreceptors that convert photons into nerve signals, they go, but the actual parts of the retina that connect our eyes to the optic nerve going back to the brain, they're still there.

Dave - So, the wiring is still there. So, the idea is, if you can somehow put the signal into that wiring, you could still see.

So, what they've been doing is they've been dealing with a series of plastics which, when you shine light on them, can produce voltages. And they've got a sheet of this plastic, called P3HT, and they've discovered that if you put a piece of retina on the top in a kind of salty liquid electrolyte on the surface, and then you shine a light at the plastic, you get enough voltage produced by the plastic to trigger these cells. And it depends on how fast you flash that light: if you flash it at 1 hertz, so you're flashing on and off once a second, about 95% of the time when you turn it on, you trigger nerve cells. If you try it at 20 hertz, 20 times a second, it gets a bit less good, 65% of the time. So, it's not perfect, but it does show that just with a very, very simple thing, just a sheet of plastic on top of the retina, you can actually produce signals which should then, if the retina was still attached to the rat, mean the rat could see.

Chris - Does enough light go into the eye to drive this thing, were you to implant this into the eye as a sort of implant?

Dave - So, at the moment, there's enough light getting there. It will be stimulated by full sunlight so you'll be able to see in full daylight. But as soon as you go inside, at lower light levels, it's not very good. So, as it is at the moment, it probably wouldn't be an ideal solution, but that's something which I'm sure they're looking into developing, increasing the sensitivity and increasing the effectiveness of the stimulation.

Phil - What I was going to say, Dave is you said they have to put a sort of salty solution on the top. Is the eventual idea to have that supplied by the fluid inside the eye?

Dave - Yes, so you're essentially filled with salty solutions and so, I think the idea is and what they'll be looking at doing next is basically just taking pieces of plastic, putting them in the eye of a blind rat and seeing whether the rat can then detect light. It has the advantage over other systems where they've actually used complex electronics in that it's a sheet of plastic. It doesn't need any power. It doesn't produce any heat and this plastic also seems to be fairly biocompatible. They've certainly been growing nerve cells on it for 20 days and the nerve cells seem perfectly happy. So, I mean obviously, it's a long way from actually being used in humans, but it's looking quite positive.

17:08 - Nanoparticle stem cell ultrasound label

Nanoparticle stem cell ultrasound label

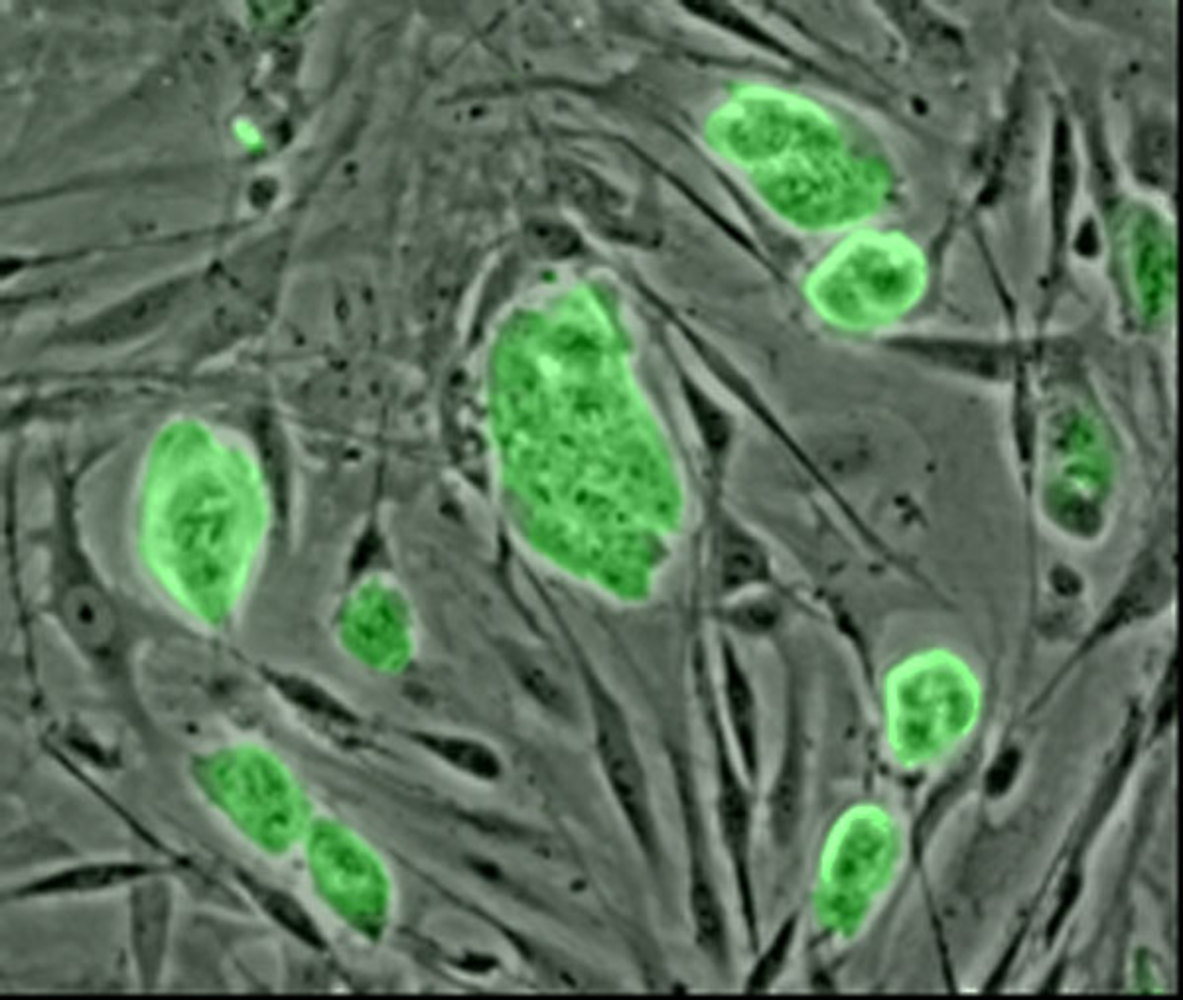

A way to label up stem cells so that they can be seen on ultrasound, MRI and microscope scans has been revealed by scientists in the US.

As Sanjiv Gambhir and his colleagues point out in their paper in Science Translational Medicine, the promises of stem cell transplantation therapies for cardiac disease have yet to be realised, partly owing to the inability of doctors to identify appropriate areas for implantation and then follow up the outcomes for those cells.

To tackle this problem, Stanford University-based Gambhir and his colleagues have developed silica nanoparticles that are taken up by stem cells, which subsequently remain visible on ultrasound or MRI scans and will even glow under ultraviolet light when specimens are imaged under a microscope.

So far they've tested the particles in the dish and in mice, but point out that they should be safe and well-tolerated in humans too.

As few as 70,000 cells labelled with the particles will show up and can be quantified under ultrasound, meaning that stem cell therapies can be objectively assessed to determine which clinical protocols, if any, offer the best outcomes for patients.

19:56 - Wide Angle 3D Screens

Wide Angle 3D Screens

with David Fattal, HP Labs

3D screens in TVs and portable game consoles like the Nintendo 3DS have been around for quite a while, but they are limited because you have to watch them from a fixed position. And if you don't want to sit around with 3D glasses on, your options are really quite limited. Now, a team from HP's research labs think that they might have solved the problem. Chris Smith and Dave Ansell were joined by one of the team, David Fattal, to find out more.

Dave - So David, how do current 3D displays work?

David - There's a whole set of solutions for 3D display out there, already in the commercial markets, and the most common type is the so-called lenticular array. So, imagine you have just a normal TV and you put a sheet of optical lenses in front of it. So, an optical lens is pretty much the same thing as a contact lens, which works to refocus light. And what these lenses are able to do is that they're actually sending light from different pixels on your normal TV into different directions in space. So, they create a bunch of directional light rays and when a viewer actually looks at the 3D display, a different image is going to come to his or her right and left eye, and therefore, they're going to perceive this so-called 3D stereoscopic effect. So the brain sees slightly different images and reconstructs the depth information about the image.

Chris - Because the key thing is that you've got to send a different picture to each of the two eyes for the brain to then recombine those and create the 3-dimensional effect, haven't you?

David - Yes, so this is exactly correct. People have been traditionally trying to do that using glasses. So glasses artificially block one image or another, in front of your right or left eye. But the trick here is to try to do this without glasses so that your display might be able to send different images to different regions of space to reach each one of your eyes.

Chris - Now of course, the problem with doing this is that the computer or the display doesn't know where your head is and therefore, doesn't know which bit to send to which eye. So, how have you got around that problem?

David - Yeah, this is a great question. So essentially, you're right. The display doesn't know where you're located and so we take the brute force approach where the display actually sends all possible perspectives, all possible images of the 3D objects, simultaneously in parallel in space. So any viewer, not only one, but you can have 10 viewers at different positions around the display, and each one of the viewers would have different imagery for the right and left eye, so they would all be able to see simultaneously in 3D. So again, a brute force approach: just send all the images at once.

Dave - I guess that has the advantage also that if you move your head, you'll see around the object in the TV as well.

David - Exactly. So, this is one of the really important points about our technology is that, you can actually move around objects and very much like you could move around the hologram of Princess Leia in Star Wars that people like to reference.

Chris - David, can you talk us through then, how you've achieved this clever trick? So, just take us from the bottom up of a normal screen with its pixels and things. How do you get the effect you're achieving?

David - So our technology is very similar to liquid crystal display technology that is mainstream today in cell phones and in your laptop screen, and the way these displays work is they have two parts. The first part is called the backlight, and you basically illuminate the backlight from the sides with a bunch of lamps, which are called LEDs. And so, the light propagates from the side of your backlight, which is a big piece of glass or plastic, and as it propagates from the edge to the centre, it encounters a bunch of so-called scatterers, so imagine a bunch of little bumps on the surface of the backlight. And when light encounters such a bump, it is actually scattered from inside the backlight into the viewing zone of the display outside. So, this scenario gives a constant flow of light and in order to form an image, you need a so-called modulator, and this is usually done using a liquid crystal front display which is, imagine, a bunch of little cells that can be controlled from completely transparent to completely opaque by just applying a certain voltage.

Chris - So, how do you then get the light so that it's only going in a certain direction. So in other words, if you were looking at it straight at the screen, one eye is going to get information from one pixel area and another eye is going to get a different one. How do you control the direction of the light?

David - Yeah, and this is where our invention comes in. We replace the little bumps I talked about that are present in traditional displays with nanostructures called diffraction gratings, which are objects with features that are smaller than the wavelength, and when light hits each of these diffraction gratings, it is scattered in a very directional manner. So, it forms a light ray in one particular direction. And then by changing the exact parameters of this nanostructure, we are able to control the direction at will. So, we can create any light ray, we can send an image in any direction we want and in parallel.
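As a rough illustration of how a grating's parameters can fix the direction of the outgoing ray, here is a minimal Python sketch. It is not taken from the interview: it assumes the textbook first-order grating-coupler relation, sin(theta) = n_eff - wavelength / period, for light guided in the backlight and leaving into air, and the effective index `n_eff` and the grating periods are hypothetical values chosen only for illustration.

```python
import math

def outcoupling_angle_deg(wavelength_nm, period_nm, n_eff=1.5, order=1):
    """Angle (degrees from the screen normal) at which guided light leaves
    the backlight, using sin(theta) = n_eff - order * wavelength / period.
    Returns None when no propagating ray exists for these parameters.
    n_eff is an assumed effective index of the guided light."""
    s = n_eff - order * wavelength_nm / period_nm
    if abs(s) > 1.0:
        return None
    return math.degrees(math.asin(s))

# Hypothetical grating periods: each one steers green light (550 nm)
# into a different fixed direction, i.e. a different "view".
for period in (340, 370, 400, 430):
    angle = outcoupling_angle_deg(550, period)
    shown = "no ray" if angle is None else f"{angle:.1f} deg"
    print(f"period {period} nm -> {shown}")
```

In this simplified picture each grating has one fixed period and so one fixed direction, which matches the point made next in the interview that the directions are set once and the liquid crystal layer only changes the brightness of each ray.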

Chris - So, can you change the direction that that grid is sending light in or is it fixed?

David - The directions are fixed, so they're set once and for all, and then the external modulator, your liquid crystal modulator is able to change the intensity of these light rays.

Chris - So basically, the computer is working out when to turn the light on or off and it's directing light out of that particular pixel in a certain direction to effectively, an eye or the other eye. And in that way, you can get the two eyes seeing different amounts of light at the same time and that enables the brain to be fooled into thinking it's seeing 3D.

David - Yeah, absolutely, and it can do so regardless of your position. And you could even be travelling around the display and you would see a continuous update of the perspective, so it would seem like you are actually perceiving a continuous motion of the object in 3D.

Chris - So, how long until I can buy a fancy smartphone and see pictures of my children in 3 dimensions on the screen?

David - You know, as a researcher, I'm not really able to comment on this kind of commercial prediction, but certainly, as a 3D display lover, I would want to see it as soon as possible. So we're just going to work hard so that within a couple of years, we can see the first application one way or another.

26:55 - Tidal Power - Planet Earth Online

Tidal Power - Planet Earth Online

with Judith Wolf and Nick Yates from the National Oceanography Centre

As an island nation, the UK's coast and rivers have played an important role in trade and transport throughout our history. But, with concerns about the limited lifetime of fossil fuels, can these watery surroundings play a future role producing tidal energy?

Planet Earth podcast presenter Richard Hollingham met Judith Wolf and Nick Yates, from the National Oceanography Centre in Liverpool, on a foggy day by Liverpool's Albert Dock. Judith began by outlining the potential energy contained by the River Mersey.

Judith Wolf: If we consider Liverpool itself we have a ten metre tidal range at spring tides. That is one of the highest in the UK, almost the highest in the world. That energy can be captured in various ways by running a barrage with turbines. The other kind of energy is the kinetic energy of the flow of the tide, and in straits and estuary mouths like here we can get really large flows which could be several knots of water speed and that energy can also be harnessed.

Richard Hollingham: Ten metres!

Judith Wolf: That's between low tide and high tide, yes, on the maximum spring tidal range.

Richard Hollingham: So, potentially, a phenomenal amount of energy there?

Judith Wolf: That's right, yes. The amount of energy you can get from a tide in an estuary is related to the area that's behind the mouth of the estuary, the area inside the estuary and the tide range at the mouth.

Richard Hollingham: Now, Nick, you were an engineer, you've been studying the potential of tides around the UK. What did you actually do?

Nick Yates: The study in question was a computer simulation. We took a computer model in which we can see the tidal wave coming in, and into that model we're able to simulate barrages, in particular across estuaries, because, as Judith said, the energy you get is proportional to the area and also the tidal range, and so an estuary is the ideal place for a barrage to exploit tidal range. A barrage is a physical structure which allows you to delay tidal motions and get a water level difference, like conventional hydro power. And actually behind you - you can't see the other side - on the Mersey you've got geography helping you: it's only a kilometre across. So for a relatively short physical structure you can then place turbines in that and get a relatively large amount of energy. So, just to give you an example for the Mersey, that would be something like a terawatt hour, which is a difficult number to get your head around, but you're probably talking somewhere between half a million and a million houses' electricity from that one structure.
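To make the dependence on basin area and tidal range concrete, here is a small Python sketch of the standard textbook estimate for the potential energy released when a basin drains from high tide to low tide, E = ½ ρ g A h². This formula is not quoted in the interview, and the basin area used below is a hypothetical figure, not a measurement of the Mersey. Note that in this estimate the energy grows with the square of the tidal range.

```python
RHO_SEAWATER = 1025.0  # kg/m^3, typical seawater density
G = 9.81               # m/s^2, gravitational acceleration

def energy_per_tide_joules(basin_area_m2, tidal_range_m):
    """Potential energy released when a basin filled to high tide drains
    to low tide: E = 0.5 * rho * g * A * h^2 (ideal, no turbine losses)."""
    return 0.5 * RHO_SEAWATER * G * basin_area_m2 * tidal_range_m ** 2

def joules_to_gwh(joules):
    """Convert joules to gigawatt hours (1 GWh = 3.6e12 J)."""
    return joules / 3.6e12

# Hypothetical 50 square kilometre basin at three different tidal ranges.
area = 50e6  # m^2, illustrative value only
for tidal_range in (5.0, 7.5, 10.0):
    gwh = joules_to_gwh(energy_per_tide_joules(area, tidal_range))
    print(f"range {tidal_range:4.1f} m -> about {gwh:.1f} GWh per tide (ideal)")
```

A real barrage recovers only part of this ideal figure, which is one reason the simulation described above is needed to turn tidal range and geography into a realistic energy yield.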

Richard Hollingham: So how much energy could you generate in the UK from the tide?

Nick Yates: We think it's going to be at least 20%: 15% from tidal range, with barrages over the major estuaries, plus 5% from tidal stream, which is from the fast motion of currents.

Richard Hollingham: And what sort of structures? Like a conventional hydro-electric plant with turbines? We see these wave generators with almost like bobbing buoys going up and down.

Nick Yates: Yeah, the structure for tidal range - if you like it's like a harbour actually, it's very similar technology. So you would have rock and then you would have probably concrete caissons where you have got turbines and sluice gates.

Richard Hollingham: And what about the environmental consequences of putting something like that on an estuary like this one here?

Judith Wolf: Well some of the environmental concerns are very much about inter-tidal habitats in estuaries. Estuaries are very productive areas and are very important for migratory birds and fish, particularly some of the mud waders and feeding birds on the estuary. Many people are concerned that the habitats that they exploit will disappear. One of the things we did in an earlier study was actually to estimate how we could best minimise that impact. If you run the barrage on ebb and flood generation you can actually modify the amount of habitat that is lost and minimise the amount that is lost.

Richard Hollingham: Now you looked at this across the UK, so different sites. Presumably some are more suitable than others?

Judith Wolf: Yes that is true. I mean for a tidal barrage we're looking for the maximum tidal range and the Mersey is particularly nice because it has a very narrow mouth and therefore you would build the minimum length of dam across it in order to capture a moderately large amount of energy, so the energy would be relatively cheap here.

Richard Hollingham: And so the dam wouldn't have to go right the way across the mouth, it would just go across a small amount.

Judith Wolf: It would have to close off the estuary completely but the tide would still flow through the dam, through the sluices and turbines.

Richard Hollingham: And how does this compare say, Nick, with wind power and other forms of alternative energy generation?

Nick Yates: Well, the key thing with tidal is that it's the renewable energy you can set your watch by, and so that predictability in particular is extremely important. It's funny, I was just reading recently some figures from Ofgem about the variability of wind, which went from nine megawatts to three gigawatts within the space of three days, but that's not to say that it's an either/or. I actually think we're going to need all of them, particularly to replace what we get from fossil fuels, but also if we move to electric vehicles demand is going to go up.

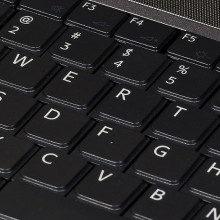

32:01 - The Problems With Digital Data Storage

The Problems With Digital Data Storage

with Leo Enticknap, University of Leeds

The odd floppy discs and even mini discs that are lying around your office might tell you all you need to know about how quickly new storage formats can become obsolete. But this problem doesn't just affect consumers. It also presents a big problem for archivists who are trying to store digital material into the future. According to Dr. Leo Enticknap, who's a lecturer in cinema at the University of Leeds, this is certainly a problem in the archiving of movies and television programmes, as he explained to Dominic Ford.

Dominic - Now, we're used to hearing about classic episodes from the 1960s being burnt because there simply wasn't storage for the film with those programmes on. Surely, with the advent of modern hard discs and DVDs, it's much easier to store vast quantities of video information.

Leo - In the short term, yes, but I'm afraid there always is a 'but' whenever we're talking about digital media. Firstly, you have a problem with chemical decomposition of the discs themselves. For example, in pre-recorded DVDs, the ones that are pressed in a factory, you can get into problems with the chemicals used to form the dye for the label on top attacking the substrate of the disc and causing problems. And with consumer-recorded DVDs, DVD-Rs for example, the recording medium is a dye, and that dye is very slightly changed in its light reflectivity characteristics by being burned by a laser. The problem is that the reaction started by the laser can't be stopped completely. It can only be slowed down almost to the point of being stopped. So, that dye layer is continuing to change very, very slowly even after it's been recorded and, as a result of that, your DVD-Rs have relatively short shelf lives.

Dominic - So, what timescales are we talking about here? If I burn a video onto a DVD, how long will it stay there?

Leo - There are a number of variables, but most archivists and librarians would not want to trust one for longer than, say, 3 to 5 years, and even then only if it's stored in optimal conditions.

Dominic - What about hard discs? Surely, those are more durable.

Leo - They're slightly more durable, but again, I'm afraid there's always a gotcha with all of these media. If you think about a hard disc, it's a complicated electromechanical device. You've got two or more, sometimes several glass platters which have the magnetically sensitive coating on them. You've got the head assembly, the platters themselves are on a spindle, which has a bearing and which has lubricant in it, and on top of that, you've got a PCB which contains the control electronics and of course, your interface to the computers.

Think about all the possible things that can go wrong on that. You can have a failure of any one of these moving parts which are incredibly tiny and also, you can have the issue of hardware or software obsolescence. What happens if in 20, 30 years' time, computers quite simply don't have USB sockets on them anymore. In the same way that computers now, simply don't have 5 1/4 inch or 8 inch disc drives in them as they did 30 years ago.

Dominic - And I guess we've touched on the question of software here. Obviously, file formats are forever changing. Does that mean that you're having to continually reprocess this material into newer file formats?

Leo - Really, we're into some quite unknown territory here because so far, we haven't had a major instance of an audio or video file format in widespread use quite simply dropping out of existence in terms of being supported by the latest generation of playback software, but you never know, it could happen.

I mean, just to give you a non-audiovisual example, if you have documents that were produced using, say, version 1 or version 2 of Microsoft Word, and you try to open them using Microsoft Word 2010, well, it can be done, but you have to get involved in some quite complicated geekery in order to do it, involving downloading plug-ins and things like that. It won't do it straight out of the box. So, even with probably the world's most widespread word processing system, if you're trying to open files that were produced using the version that was in use 15 to 20 years ago, you're going to have a struggle. In 20 years' time, a software manufacturer could be saying to itself, "Well, so few people now are still using mp3 that we're not going to push our production costs up by supporting this one." And yes, you could be in a position whereby it's going to be very, very difficult to read back that file format.

Dominic - So, this sounds like it's a problem not just for the media wanting to keep videos on file, but also for academics who want to keep documents that they were discussing 10, 15 years ago.

Leo - Yes, absolutely. I think archivists are going to have to keep their collections under absolute constant review and look at carrying out migration both in a hardware sense and in a software sense, as and when support issues really do start to emerge.

Dominic - So, what are companies doing to try and solve this problem?

Leo - There is no silver bullet. There is no widely accepted store and ignore format. In short, you've got a problem. And the moment you decide that you want to preserve this digital movie or this sound recording or whatever in the long term, you are making a long term commitment to manage actively the integrity of that digital asset.
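One common way to "manage actively the integrity" of a collection is to record a checksum for every file and re-check the copies at intervals. The Python sketch below is only an illustration of that idea under stated assumptions, not a description of any archive's actual workflow: the function names are hypothetical, SHA-256 is one reasonable choice of checksum, and the demo runs on a throwaway temporary folder.

```python
import hashlib
import tempfile
from pathlib import Path

def sha256_of(path, chunk_size=1 << 20):
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def build_manifest(root):
    """Map each file's path (relative to root) to its checksum."""
    root = Path(root)
    return {
        p.relative_to(root).as_posix(): sha256_of(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }

def find_problems(root, manifest):
    """Compare a folder against an earlier manifest.
    Returns (missing_files, changed_files) as two sorted lists."""
    current = build_manifest(root)
    missing = sorted(name for name in manifest if name not in current)
    changed = sorted(
        name for name in manifest
        if name in current and current[name] != manifest[name]
    )
    return missing, changed

if __name__ == "__main__":
    # Demo on a temporary folder: record checksums, then simulate damage.
    with tempfile.TemporaryDirectory() as folder:
        video = Path(folder) / "wedding.bin"
        video.write_bytes(b"original footage")
        manifest = build_manifest(folder)
        video.write_bytes(b"silently damaged")
        print(find_problems(folder, manifest))
```

Keeping the manifest alongside each copy means that a damaged or missing file on one drive can be spotted and restored from another copy while a good copy still exists.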

Dominic - I guess this affects not only big companies who are making videos professionally, but also, people at home. If you're shooting a video of your wedding for example, what can you do to make sure that future generations of your family will be able to see that?

Leo - You've basically just got to do a scaled-down version of what the professionals do. You need to take ownership of that material and decide that you are going to make a long term commitment to keeping it.

What I do personally, for example, is that I back up all of my data to separate sets of portable hard drives every week. One of them then goes into work with me on Monday morning and sits in my desk drawer for a week, so that if my flat burns down, then I've got a copy of my data in another physical location. And I'm looking at constantly backing up, constantly keeping an eye on the currency of the file formats that the files are encoded in.

And to be honest, it's partly because I do worry about my ability to do that, that I still take most of my personal photographs on a Leica M3 film camera that I inherited from my grandfather. With a 35mm film negative, yes, obviously, what I'll do is scan those negatives so I can email the photos to people and that sort of thing. But ultimately, if I suffer a complete data loss disaster, I can always scan those films again. If the original is a born-digital file and all the copies of it that I have go, well, then that's the end.

Dominic - That's a technology that's been used for over 100 years, so I guess you can be fairly sure that it will probably still be in use in decades to come. I guess this has implications for how historians will look back on the 21st century. Some people have said that they'll be overwhelmed with the amount of stuff that we record, but you're actually hinting that they will have a problem of not being able to read our records.

Leo - I think there will be that problem as well and furthermore, there's even a phrase for it which has started to do the rounds in the archival community, and that is the Digital Dark Age. It's being speculated that, just as we have lost an estimated two-thirds to three-quarters of the film shot during the first quarter of the 20th century, we might end up in a situation whereby we've got this kind of black hole from, say, the first 1 to 3 decades of the digital era where, in fact, very little survives.

40:34 - Storing Digital Data Inside Glass

Storing Digital Data Inside Glass

with Eric Mazur, Harvard University

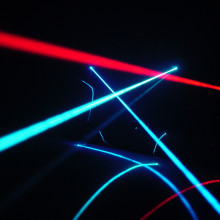

The so-called 'digital dark age' that archivists are warning of does mean that the search is on for a store and ignore technology to allow us to preserve digital data into the long term. One team from Harvard University led by Professor Eric Mazur has invented a process that can, they say, "permanently store digital data inside glass".

Dave - So Eric, how does this process actually work?

Eric - It works by taking extremely short, but intense laser pulses and focusing them into the bulk of the glass. Not on the surface, but in the bulk of it and essentially, creating a tiny void that can serve the same role as changing the dye at the surface of a DVD or a CD.

Chris - But if you vaporise the glass on the inside, I don't understand where the glass that you vaporise goes to make the hole.

Eric - Well, if you take any material, it's made up of atoms and atoms themselves are mostly nothing. The nucleus of an atom is tiny, tiny compared to the actual size of the atom. So, in the lattice of any material - glass happens not to have a lattice, it's amorphous - there's plenty of interstitial space, other places to drive additional material. So basically, what happens is that in that tiny little void that you've created with this super intense laser pulse, the atoms have basically been driven into the surrounding material.

Chris - So, you get a little sort of hole which behaves like, as you said, the pit that you would write into a DVD or a CD. So, do you use the same sort of data encoding then to put the data into these little pits or are you using your own proprietary way of encoding the data?

Eric - No, we would use the standard technique and in fact, we've only demonstrated the technique. We've not gone as far as commercialising it, but you would, I think as a first step simply use the same method that you use at the surface of a commercial CD or DVD. But now, instead of writing just a single layer, or in the case of a multi-layer DVD, maybe 2 or 3, you could write in the thickness of a standard CD or DVD 100 or more layers.

Dave - So, how do you cause it to form these little pits inside the glass at a certain depth and not higher up, because surely the light's got to go through some glass to get there?

Eric - Exactly, so the trick is in exploiting this extremely high intensity of light that occurs with pulses that have durations that are measured in what are called femtoseconds, a millionth of a billionth of a second. When you make your laser pulse that short, the intensity of the light becomes phenomenal because you're basically focusing the light in time if you wish. If you now also focus it in space using a lens, you essentially put a whole lot of light on top of each other, you basically bunch up the light at the focus of this lens both in space, in the transverse direction, as well as in time, in the longitudinal direction. And as a consequence, the interaction of the light and matter becomes very different from the one we're normally accustomed to.

If you take glass for example, we use glass as windows because it's transparent which means by definition, the light is not interacting with the glass. It goes through it. But when you get to this exceptionally high intensity, and I'm talking an intensity where the electric field in the light - because light is an electromagnetic wave - becomes larger than the electric field that binds electrons to atoms. When you get to that regime, you basically change the nature of the interaction between the light and the material completely and you are actually able to rip apart the bonds between the atoms and deposit energy in that tiny little volume. It's tiny because it is completely limited to the volume where you focus the light. So, if I place the focus of my laser beam, not at the surface but inside the glass, I could not do this with a material that's not transparent obviously because then absorption would start at the surface. But if I take glass or plastic or any other transparent material, the absorption will only happen in a very tiny volume at the focus of the laser beam.
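To put rough numbers on "focusing the light in time", here is a minimal Python sketch. The pulse energy, pulse durations and spot radius are hypothetical values picked for illustration and are not figures given in the interview. It only shows that squeezing the same energy into a shorter pulse raises the peak power in proportion.

```python
import math


def peak_power_watts(pulse_energy_joules, pulse_duration_seconds):
    """Average power during the pulse: energy divided by duration."""
    return pulse_energy_joules / pulse_duration_seconds


def intensity_watts_per_m2(power_watts, spot_radius_metres):
    """Power spread over a circular focal spot of the given radius."""
    return power_watts / (math.pi * spot_radius_metres ** 2)


# All four values below are assumptions for illustration only.
PULSE_ENERGY = 1e-6          # one microjoule per pulse
FEMTOSECOND_PULSE = 100e-15  # 100 femtoseconds
NANOSECOND_PULSE = 1e-9      # 1 nanosecond, for comparison
SPOT_RADIUS = 0.5e-6         # half a micrometre

fs_power = peak_power_watts(PULSE_ENERGY, FEMTOSECOND_PULSE)
ns_power = peak_power_watts(PULSE_ENERGY, NANOSECOND_PULSE)
fs_intensity = intensity_watts_per_m2(fs_power, SPOT_RADIUS)

print(f"femtosecond pulse peak power: {fs_power:.3g} W")
print(f"nanosecond pulse peak power:  {ns_power:.3g} W")
print(f"focused femtosecond intensity: {fs_intensity:.3g} W/m^2")
```

With these assumed values, the same energy delivered in 100 femtoseconds rather than a nanosecond gives ten thousand times the peak power, before any focusing in space.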

Chris - Eric, some people say that glass is a bit like a super cooled liquid so it flows over time. Does this mean that the glass could move over time and occlude these tiny holes you've made and then your data would disappear?

Eric - That is definitely true. Nothing lasts forever, right? I mean, we know that. However, there are certainly glass specimens that have lasted over a thousand years. We still have glass objects from the Romans that are in very good shape. I think it would extend the lifetime of most recording media by several orders of magnitude and I think a hundred or several hundred years would be probably a safe estimate for a reliable readout of any data stored in this way.

Chris - And I presume that the really good thing here is that because the data is being stored inside the glass rather than on the surface of the glass, like on the surface of a record, it means that surface abrasion or damage does not damage the integrity of the data because you can read through that and see the message still written inside.

Eric - Correct. You could always repolish the outside to get a clean surface again.

Chris - So, how much data can you pack into a certain area or unit area of glass?

Eric - Well, as I said, I mean, you can easily pack in a hundred - in a standard platter, the size of a CD or DVD, you could easily put 100 to 200 layers. So imagine, putting the equivalent of 200 DVDs in a single DVD.
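As a back-of-the-envelope check on "200 DVDs in a single DVD", the short Python sketch below multiplies the layer count by the nominal 4.7 GB capacity of a single-layer DVD. Treating every glass layer as holding exactly one DVD layer's worth of data is an assumption made here for illustration.

```python
DVD_LAYER_CAPACITY_GB = 4.7  # nominal capacity of a single-layer DVD


def glass_disc_capacity_gb(layers, per_layer_gb=DVD_LAYER_CAPACITY_GB):
    """Total capacity if each layer stores one DVD layer's worth of data."""
    return layers * per_layer_gb


for layers in (100, 200):
    print(f"{layers} layers: about {glass_disc_capacity_gb(layers):.0f} GB")
```

On that assumption, 100 to 200 layers corresponds to roughly half a terabyte to just under one terabyte per disc.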

Chris - And have you had much interest from anyone saying that they'd like to try and use this commercially or is this literally just rooted in the academic world at the moment as an interesting thing you can do?

Eric - No, we certainly have had interest. There are still a number of technological hurdles. One is that the laser that is used to write is not something that is the size of a laser that you would find in a DVD or CD writer. So, this would not easily become a consumer item, but it could become an item for archival data storage. The other one is that writing the data is a lengthy process, given the current state of femtosecond lasers. Although that has been improving dramatically over the past few years, and I could very well see that in a few years' time we could actually have a laser that could write 200 DVDs in a relatively short amount of time, meaning something like minutes rather than hours or days.

The other problem is, of course, that right now, magnetic and other types of semiconductor media are so cheap that writing the data in magnetic media and then copying them if you want to preserve them is often a more cost-effective technique than trying to exploit a new and as yet unproven technique.

47:49 - Data Compression

Data Compression

with Christian Steinruecken, University of Cambridge

It's not just new technology that could help us to store digital data in the future. Already, we compress some data so that it takes up a lot less space. To find out how this works, Chris Smith and Dave Ansell were joined by PhD student Christian Steinruecken who is from the Department of Engineering at Cambridge University.

Dave - So Christian, what exactly is data compression?

Christian - Data compression is really the art of communicating using fewer bits than it would usually take.

Dave - Where bits are the 1s and 0s which all digital information is stored in.

Christian - That's right, using fewer units of information to communicate the same message.

Dave - So, how do you reduce the number of bits you're going to use?

Christian - So, the idea is to re-write the message in a different way and that re-writing can be made to exploit properties of the data that include for example, long repetitions or statistical properties in such a way that fewer symbols are needed.

Dave - So, if you have something which goes 101 101 a thousand times, you could say, instead of writing 101 a thousand times, "please write me a 101 a thousand times" which is that one sentence rather than...

Christian - That's one way of doing it.
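Dave's "write me a 101 a thousand times" idea can be sketched in a few lines of Python. This is only a toy illustration of exploiting repetition, not how any production compressor works: it looks for the shortest pattern that, repeated a whole number of times, reproduces the message. The function names are made up for this example.

```python
def encode_repetition(message):
    """Return (pattern, count) such that pattern * count == message.

    Tries patterns from shortest to longest, so the first match is the
    most compact description. A message with no internal repetition
    comes back as (message, 1).
    """
    length = len(message)
    for size in range(1, length + 1):
        if length % size == 0 and message[:size] * (length // size) == message:
            return message[:size], length // size
    return message, 1  # only reached for the empty message


def decode_repetition(pattern, count):
    """Rebuild the original message from its compact description."""
    return pattern * count


message = "101" * 1000
pattern, count = encode_repetition(message)
print(f"{len(message)} symbols described as {pattern!r} repeated {count} times")
```

The 3,000-symbol message shrinks to a three-symbol pattern plus a count, and `decode_repetition` restores it exactly.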

Dave - Do we use compression a lot at the moment?

Christian - Yes, compression is ubiquitous. Every smartphone has compression algorithms built in and it's something that ships with every operating system at the moment, it's a technique that is vital for certain things to work at all. If you imagine, for example, the idea of a Mars rover on the surface of Mars and you have limited bandwidth to communicate with that rover, you are talking about a very expensive mission. You want to have the ability to get as much data back from Mars as you can. The bandwidth limit is not the camera on the rover or the instruments. It's the satellite link. We'd like to compress the data as much as possible so that we can send more data overall.

Dave - So, I guess there's two ways of compressing. You can either attempt to produce exactly the same output or you can just try and get the important parts of the data.

Christian - Yes, both play a role. So, there's a difference between lossy compression and lossless compression. Lossy compression is what's happening in jpeg for example: we can throw away part of the data that we don't so much care about. The other idea (of lossless compression) is to preserve precisely the data that there are, but exploiting statistical properties to make them smaller.
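The difference between the two families can be shown with a small Python sketch. The lossless half uses the standard library's `zlib` module as a stand-in for any lossless compressor. The lossy half rounds sensor-style readings to a coarser step, which is a deliberately crude illustration and not what JPEG actually does. The sample text and readings are invented for the example.

```python
import zlib


def lossless_round_trip(data):
    """Compress then decompress; the result is byte-for-byte the input."""
    return zlib.decompress(zlib.compress(data))


def lossy_quantise(readings, step):
    """Round each reading to the nearest multiple of step.

    Fine detail smaller than the step is discarded for good.
    """
    return [round(value / step) * step for value in readings]


text = b"the quick brown fox jumps over the lazy dog. " * 50
compressed = zlib.compress(text)
print(f"lossless: {len(text)} bytes -> {len(compressed)} bytes")
print("exact copy recovered:", lossless_round_trip(text) == text)

readings = [20.13, 20.17, 19.98, 20.02]  # hypothetical sensor values
coarse = lossy_quantise(readings, step=0.5)
print("lossy:", readings, "->", coarse)
```

After quantising, the four readings become identical, so they are far easier to describe compactly, but the original small differences cannot be recovered.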

Dave - So, what are you actually looking at in your research?

Christian - In my research, I look at structural compression, which is [based on] the idea that certain mathematical properties of data can be exploited to make them really small. For example, if you have combinatorial objects, multisets or permutations, that sort of thing, these are [examples of] mathematical properties which can be exploited. Most data that we're dealing with are highly structured. For example, the surrounding sequence in a long sequence of text tells us a lot about the bits that we don't yet know. So when communicating a text or a long file, having already seen part of the file helps us to encode the next symbol in a better way. I look at compression algorithms that take advantage of such properties in files, in order to make it easier to transmit larger files and make them smaller.

Dave - So, if you really, really understand what you're sending, you don't actually have to send nearly as much.

Christian - Yeah, the idea is that if we already know which data we're going to send then we don't have to send anything. The problem is making an algorithm that learns very quickly what the data is and gets a good idea of what is going to come, and exploiting those properties that we learn. So, it's really a form of machine learning.
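One way to see how "having already seen part of the file" helps is to measure how many bits an ideal coder would need when its predictions come from a model that learns as it reads. The Python sketch below is a simplified illustration, not the algorithms from Christian's research: it counts how often each symbol has followed each recent context, turns the counts into probabilities, and charges -log2(p) bits per symbol. It assumes both sides already know the alphabet.

```python
import math
from collections import defaultdict


def adaptive_cost_bits(text, order):
    """Ideal code length of text under a model that learns as it goes.

    order is how many preceding symbols are used as context: 0 means
    plain symbol frequencies, 2 means "what usually follows these two
    characters". Counts start at zero and are updated only after each
    symbol has been charged, so a decoder could keep the same counts.
    """
    alphabet_size = len(set(text))  # assumption: alphabet agreed in advance
    counts = defaultdict(lambda: defaultdict(int))
    total_bits = 0.0
    for position, symbol in enumerate(text):
        context = text[max(0, position - order):position]
        seen = counts[context]
        total_seen = sum(seen.values())
        # Add-one smoothing keeps unseen symbols possible.
        probability = (seen[symbol] + 1) / (total_seen + alphabet_size)
        total_bits += -math.log2(probability)
        seen[symbol] += 1
    return total_bits


sample = "the cat sat on the mat and the cat sat on the hat " * 20
print(f"raw 8-bit text:      {8 * len(sample)} bits")
print(f"order-0 model:       {adaptive_cost_bits(sample, 0):.0f} bits")
print(f"order-2 model:       {adaptive_cost_bits(sample, 2):.0f} bits")
```

On repetitive text like this sample, the model that uses two characters of context needs far fewer bits than the one that only tracks overall letter frequencies, because the context makes the next symbol much more predictable.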

Chris - So, it's not just about a machine where you sort of turn the handle, you put the data in and it comes out in a crunched down way. This is actually about the machine, learning the pattern of the data in order to become better as it goes on at producing a more condensed or compressed output.

Christian - That's right. The problem is that at the time when the Mars rover is sending a file, the technology that's in it to compress doesn't yet know what the data is going to be. So it has to learn as it goes along what the data is, to exploit those properties. At the receiving end, the receiver will learn exactly the same way and as data is decoded, will update its internal model in order to be able to follow along.

Chris - Does one have to send the code to the other so that I've compressed something and learned how I'm doing as I go along? Do I need to send you the code I use so that you know how to unpick what I did and regenerate what I started with?

Christian - The idea is that we both use the same compression programme and when we start out, both compression programmes are in exactly the same state. Now, as I start compressing a file, my compression programme starts learning. It starts learning that certain names appear very often in the text. It may learn all sorts of other things. At the time when I decompress the file, the same process repeats. For the copy that it's decompressing, it will also learn gradually as it decompresses what those names are. So, the compressor and the decompressor really have to be in sync. They are learning the same thing separately, on perhaps different planets.
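A classic example of a compressor and decompressor that start in the same state and learn in step is the LZW algorithm. It is used here as a well-known stand-in and is not necessarily the method Christian works on. In this Python sketch both sides begin with the same table of 256 single-byte entries, and each adds the same new phrases at the same moments, so the table itself never has to be transmitted.

```python
def lzw_compress(data):
    """Turn bytes into a list of integer codes, learning phrases as it goes."""
    table = {bytes([value]): value for value in range(256)}
    current = b""
    codes = []
    for value in data:
        candidate = current + bytes([value])
        if candidate in table:
            current = candidate  # keep extending a phrase we already know
        else:
            codes.append(table[current])
            table[candidate] = len(table)  # learn the new phrase
            current = bytes([value])
    if current:
        codes.append(table[current])
    return codes


def lzw_decompress(codes):
    """Rebuild the bytes, reconstructing the same table from the codes alone."""
    if not codes:
        return b""
    table = {value: bytes([value]) for value in range(256)}
    previous = table[codes[0]]
    output = [previous]
    for code in codes[1:]:
        if code in table:
            entry = table[code]
        elif code == len(table):
            # The phrase the compressor learned on its very last step.
            entry = previous + previous[:1]
        else:
            raise ValueError("code not consistent with the learned table")
        output.append(entry)
        table[len(table)] = previous + entry[:1]  # mirror the compressor
        previous = entry
    return b"".join(output)


message = b"Curiosity rover on Mars; " * 20
codes = lzw_compress(message)
print(f"{len(message)} bytes sent as {len(codes)} codes")
print("recovered exactly:", lzw_decompress(codes) == message)
```

The receiver never sees the sender's table. It recovers the same entries purely from the order in which the codes arrive, which is the "learning the same thing separately" that the interview describes.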

Chris - And is it just on Mars rovers or are there other applications? I mean, we're going to compress the audio for this programme for example and that means we'll throw away some of the frequencies, some of the sounds that we don't think people are going to be able to hear or will not notice if they're gone. So, could you take this similar sort of thing and make even better ways of compressing sound files so that our programmes aren't going to take up as much space in someone's iPod?

Christian - That's right. Whenever we have a better understanding of what properties we care about in the data, we can make a better compressor, and this is partially why there are many different file formats for audio because every now and then there's a small revolution where an incremental change produces a better way of storing information. And so, for audio for example, something that happens in the mp3 format, and in other formats, is that some of the frequency bands are thrown away that humans can't easily hear.
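The idea of discarding frequency bands can be illustrated with a toy Python sketch. Real audio codecs such as MP3 rely on psychoacoustic models and different transforms, so this is only a crude stand-in: it builds a short signal from a strong low tone and a faint high tone, transforms it with a plain discrete Fourier transform, zeroes every bin above a cutoff, and transforms back. The signal, the cutoff and the function names are all assumptions made for the example.

```python
import cmath
import math


def dft(samples):
    """Naive discrete Fourier transform (fine for short signals)."""
    n = len(samples)
    return [
        sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]


def inverse_dft(spectrum):
    """Inverse transform, returning the real part of each sample."""
    n = len(spectrum)
    return [
        (sum(spectrum[k] * cmath.exp(2j * math.pi * k * t / n) for k in range(n)) / n).real
        for t in range(n)
    ]


def drop_high_bands(samples, cutoff_bin):
    """Zero every frequency bin above cutoff_bin and rebuild the signal."""
    spectrum = dft(samples)
    n = len(spectrum)
    kept = [
        value if min(k, n - k) <= cutoff_bin else 0
        for k, value in enumerate(spectrum)
    ]
    return inverse_dft(kept)


N = 64
low_tone = [math.sin(2 * math.pi * 3 * t / N) for t in range(N)]
high_tone = [0.2 * math.sin(2 * math.pi * 25 * t / N) for t in range(N)]
signal = [a + b for a, b in zip(low_tone, high_tone)]

filtered = drop_high_bands(signal, cutoff_bin=8)
largest_change = max(abs(a - b) for a, b in zip(signal, filtered))
print(f"largest change to any sample: {largest_change:.3f}")
```

What comes back is the low tone alone. The faint high tone is gone for good, which is the sense in which this kind of compression is lossy.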

Chris - And that means that then you're saving space overall. You've thrown the data away, but you're never going to get it back though. So that's an example of a lossy compression. Are you saying that your thing can be used in a way that will get back that sound we've thrown away?

Christian - I think it completely depends. Many sensors record things that we don't care about, but when it comes to things like electronic text, we probably want to preserve the exact text without changing it when we decompress it. So, this is a case where we really want lossless compression. Similarly, when we have executable files or computer programmes that we may want to compress, or perhaps medical data, all sorts of things where it's really important to keep the precise file that we had to begin with then we want to use lossless compression.

54:41 - Could we cope without computers?

Could we cope without computers?

This week, we contemplate a rather doomsday scenario.

Martin - Hi. My name is Martin Harris and I live in Chilton. My question is, we rely increasingly on computer networks. If a solar storm or malicious virus hits the network, could our current civilisation's dependency on computer networks be damaged irreversibly?

Hannah - So, could it spell the end of the world as we know it if all computers across the world crashed or could it lead to worldwide liberation? First up, what could cause our computers to conk? Listener Evan Stanbury from Australia got in touch with this.

Evan - A widespread computer virus could impact our computer networks temporarily perhaps for hours or days. Geomagnetic storms caused by solar flares can damage the electricity grid and cause widespread blackouts lasting days or even weeks especially in regions near the poles. High altitude atomic explosions can cause an electromagnetic pulse that could shut down the electricity grid and fry the electronics in our computers, mobile phones and car ignition systems.

Hannah - Not invincible then, and computers don't just sit on our desks, as Mike Muller from computer chip design company ARM explains.

Mike - There are lots of different types. They're in mobile phones, anti-lock brakes, TVs, traffic lights, railway signal systems, and maybe the digital radio that you're listening to this on, and they're all joined together by computers and satellites, and ones that run the internet.

Hannah - So, what could the effect of a computer wipe-out be? Stuart Coulson from the online security company Secarma said this.

Stuart - I think the biggest fear would be medical devices. If they were to fail, well that's loss of life straightaway, critical care patients, they're unlikely to survive, alarm systems for vulnerable people, they're going to fail.

Hannah - Plus, there's this.

Jonathan - Hello. My name is Jonathan Bowers. I'm the MD of UK Fast, an internet hosting company. I think the biggest area that would be affected would probably be the financial sector. There'd be no more international trade, same-day payments, things like immediate cash transactions or a stock exchange. We'd have, well, what you might call a socio-economic breakdown.

Hannah - Plus, on the forum, listeners got in touch, highlighting computer crashes causing mayhem on the roads, transport failures, system shutdown, and inevitable food shortages. But Jonathan also adds.

Jonathan - On a lighter note, evenings would be interesting with no more TV and an end to reality TV at last. So in that way, it possibly is a good thing.
