This week we're getting inside the workings of the next generation of chips that are set to pack a bigger computing-punch but at a fraction of the energy-expenditure of today's models: CTO Mike Muller joins us to explain the revolutionary technology that leading microprocessor-maker ARM is developing. Also, energy-efficient world-wide computing - we find out how distributing data-processing demands around the planet can turn waste energy into useful computations, simultaneously saving CO2 emissions, and in the news this week, a new malarial mosquito threat, rejection-free artificial blood vessels and the electric cap that helps users solve maths puzzles they previously found impossible.

In this episode

01:47 - New Malaria-susceptible mosquito found

New Malaria-susceptible mosquito found

Researchers this week have reported in the journal Science the existence of a new subspecies of mosquito that is highly susceptible to the most dangerous form of malaria parasite.

Michelle Riehle and her colleagues from the Institut Pasteur in Paris and the University of Minnesota collected larvae of Anopheles gambiae mosquitoes from pools around villages in Burkina Faso in West Africa over the course of two years.

They found that there were two genetically distinct groups of mosquito larvae in the pools - one group whose genetic profile clustered with the profiles of indoor-living mosquitoes captured from inside people's houses, and a separate subgroup that has never been captured inside.

This second group, which the researchers described as behaviourally exophilic - basically they live outside - were then grown in the lab and fed blood infected with Plasmodium falciparum, the most deadly form of malaria to infect humans. The researchers found that 58% of the exophilic group became infected compared to 35% of the known indoor-living subspecies, although it's not clear whether this translates to being more likely to infect a person, and as yet there is no evidence that the exophilic subgroup feeds from humans.

The worrying thing here is that a lot of what we currently know about mosquito vectors in Africa has come from the study of mosquitoes captured inside houses and buildings, and many of the malaria control measures in the area concentrate on the indoor mosquitoes - so strategies like spraying the insides of houses with insecticide and using mosquito nets. Combined with the discovery that this new subspecies is more susceptible to being infected with the Plasmodium falciparum parasite, it may mean strategies for dealing with malaria in West Africa need to be rethought.

04:27 - Hard graft yields new artificial arteries

Hard graft yields new artificial arteries

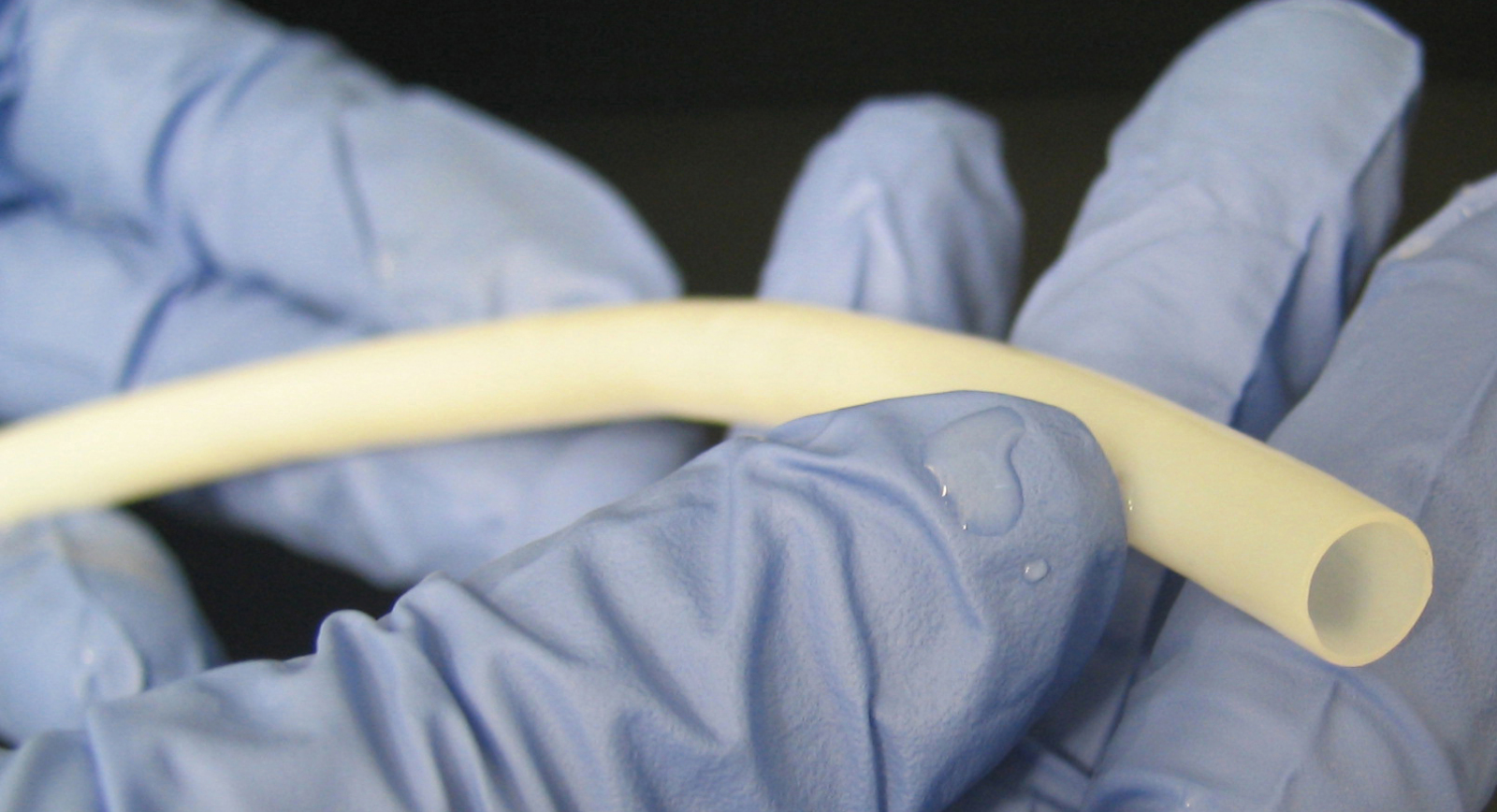

The field of arterial graft surgery looks set to take a big leap forward thanks to a breakthrough by US scientists.

Writing in Science Translational Medicine, Shannon Dahl, a researcher with the North Carolina-based bioengineering company Humacyte, has described a method for making large, very strong, biocompatible artery grafts. Until now, if a patient required a replacement artery - for instance to bypass a blocked coronary vessel - the ideal source was usually one of their own long saphenous veins, which run up the legs. And although for larger graft requirements - such as repairing an aortic aneurysm - PTFE (polytetrafluoroethylene) replacements are very effective, this material is unsuitable for smaller vessels because it tends to become blocked with blood clots after a matter of months.

In general, except where pieces of a patient's own vessels are used, most vascular grafts (75%) work for fewer than three years. Motivated by this relatively poor performance, Dahl and her colleagues have taken a different approach - by growing new arteries.

The method pioneered by the team involves starting with a tubular scaffolding made from a biodegradable material called polyglycolic acid (PGA). These scaffolds are immersed in a culture solution containing smooth muscle (SM) cells of the type normally found in the walls of blood vessels. Over a seven to ten week period the smooth muscle cells proliferated over the PGA scaffold, dissolving and replacing it with a matrix of connective tissue. Next, detergents were used to "decellularise" the nascent vessels, leaving behind just a tough connective tissue "tube".

Pressure tests showed that these structures could withstand over 3000 mmHg, fifteen times normal human blood pressure. Next, to verify their clinical potential, the team implanted the grafts, which are dubbed TEVGs - Tissue Engineered Vascular Grafts - into baboons, cats and dogs, where they remained long-term patent in over 80% of cases. Subsequent study of the implanted grafts showed that the animals' own endothelial cells, which line the vasculature, had grown in to coat the interior surfaces of the grafts.

Critically, there was also no evidence that the animals' immune systems were reacting to the implanted vessels. This suggests that the technique could be used to rapidly produce safe, non-immunogenic replacement vascular segments for human recipients, including patients requiring vascular access for kidney dialysis, and in large amounts.

08:26 - Electrical brain stimulation boosts lateral thought

Electrical brain stimulation boosts lateral thought

Researchers in Australia have come up with the electrical equivalent of a thinking cap, capable of broadening the mind. Writing in PLoS One this week, University of Sydney scientists Richard Chi and Allan Snyder used a technique called transcranial direct current stimulation (tDCS) to significantly boost the problem-solving abilities of a group of 60 volunteers. The two researchers set out to test the hypothesis that the more we know, the more close-minded we are; in other words, the better informed we become, the less intuitive it is to "think outside the box". This, they reasoned, is down to a brain region in the anterior temporal lobe, which has previously been associated with insight and attaching novel meaning to experiences and situations. Damage to this area on the dominant (usually left) side of the brain in patients with dementing diseases, head injuries and strokes has been shown to lead to the emergence of latent artistic and other creative talents, suggesting that, on the dominant side of the brain, it probably plays a role exerting a top-down or hypothesis-driven style of cognitive reasoning.

Damaging or deactivating it, on the other hand, unleashes the more creative non-dominant, right-hand side of the brain. The researchers therefore asked their participants to solve some logic puzzles that were arranged in three categories of increasing difficulty and involved moving matches according to a simple rule in order to complete a set of sums. During an initial training session, the subjects solved puzzles that all involved the same strategy. This knowledge, the researchers expected, would then make it trickier for the participants to solve the subsequent puzzles, which required a different approach. The volunteers were divided into three groups. In one group a small current was applied imperceptibly across the temporal region of the head with the left side negative and the right side positive. This configuration has been shown to inhibit underlying structures on the left. A second group were rigged identically except that the right side was made negative and the left side positive. A third group were controls and received no stimulation.

The results showed a powerful effect, with subjects receiving the left-negative stimulation showing significantly more success solving the subsequent puzzles than either the other stimulation group or the controls (50% success versus 20% respectively). In other words, those subjects in which the left anterior temporal region was inhibited were much better at developing original cognitive strategies to solve the novel problems. Quite literally, these individuals were thinking better outside the box. As the researchers point out, "predisposition to use contextual cues from past experience confers a clear evolutionary advantage in rapidly dealing with the familiar, but this can lead to the mental set effect or over-generalisation." Snyder and Chi also quote John Maynard Keynes who pointed out in the 1930s, "The difficulty lies, not with the new ideas, but in escaping from the old ones, which ramify into every corner of our mind." And it looks like he was right!

15:04 - Dry Amazon rainforest could contribute to climate change

Dry Amazon rainforest could contribute to climate change

Back in 2005, the Amazon, which covers nearly 7 million square kilometres of South America, suffered a drought which was billed as a 'once in a century' event. Droughts like this are caused by increased sea surface temperatures in the Atlantic Ocean.

Now a team led by Simon Lewis from the University of Leeds have analysed data from another drought, in 2010, and concluded that this was in fact even more serious, and that these successive droughts could start causing big global problems.

They found 57% of the Amazon region had low rainfall in 2010 compared to 37% in 2005, and also that the water stress on trees was more severe. They worked this out using a measure of drought severity called the maximum climatological water deficit, which correlates with how likely trees are to die from a drought. They calculated it by taking away the estimated transpiration of the trees - that's the amount of water they lose from their leaves - from the lowest amount of water input to the region. Doing this, they estimated that 3.2 km² of forest in 2010 would have suffered a level of drought enough to cause significant tree death, compared to 2.5 km² in 2005.
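To make the idea of a water deficit concrete, here is a minimal Python sketch. It assumes one common way of turning "water in minus water lost from leaves" into a single number: a running monthly deficit that deepens whenever rainfall falls short of transpiration and resets once the shortfall is made up. The fixed 100 mm per month transpiration figure, the function name and the rainfall numbers are illustrative assumptions, not values taken from the study.

```python
def max_climatological_water_deficit(monthly_rain_mm, monthly_transpiration_mm=100.0):
    """Return the most negative running water deficit (in mm) over the months given.

    Assumption: transpiration is a fixed amount each month, and the deficit
    can never go above zero (surplus rain is not stored).
    """
    deficit = 0.0
    worst = 0.0
    for rain in monthly_rain_mm:
        # Add this month's rain, take away what the trees transpire.
        deficit = min(0.0, deficit + rain - monthly_transpiration_mm)
        worst = min(worst, deficit)
    return worst


# Made-up rainfall figures (mm per month) for a normal year and a drought year.
normal_year = [250, 230, 240, 200, 150, 110, 100, 105, 120, 160, 200, 240]
drought_year = [250, 230, 240, 200, 120, 60, 30, 20, 50, 110, 200, 240]

print(max_climatological_water_deficit(normal_year))
print(max_climatological_water_deficit(drought_year))
```

The more negative the result, the harder the dry season has been on the trees; in this sketch the normal year never runs a deficit while the drought year does.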

So why is this important? Well the Amazon acts as a giant carbon sink - sucking CO2 out of the atmosphere and locking it away in plant matter, which has helped to act like a buffer against all the extra CO2 humans have been pumping up into the atmosphere. But if droughts like the 2005 and 2010 events keep happening, and they will if sea surface temperatures continue to rise as they have been in recent years, more trees will die. This means that not only will the trees stop taking in CO2, but they will actually start to release it as a result of being broken down by microbes. Another effect of increasing temperatures, combined with a build up of dead plant material is an increase in the likelihood of forest fires, which can release huge amounts of CO2.

Simon Lewis and his colleagues suggest that this could become a positive feedback cycle, resulting in major forest loss and the loss of an important carbon buffering system, which could have major global implications.

17:47 - Programmable packaging for cells

Programmable packaging for cells

Scientists have developed the cellular equivalent of a shrink-wrapping system which is capable of packaging up chemicals inside the cell.

Writing in Science, Zurich-based researcher Donald Hilvert and his colleagues added a gene encoding the enzyme lumazine synthase to E. coli bacteria. This enzyme, borrowed from another bacterium that inhabits hot springs, is normally involved in the synthesis of one of the B vitamins. But it also self-assembles into hollow, 20-sided icosahedral cages or "capsids" capable of carrying a cargo.

The breakthrough achieved by the Zurich team was to "functionalise" the cages so that as soon as they were assembled inside a cell, they would automatically grab a specific chemical and package it inside themselves. This was achieved by altering a small number of the amino acid building blocks from which the cages are built to give them an additional negative charge. Then, by adding a positively-charged chemical group to the substance they wanted to sequester inside, the electrical attraction between the two took care of the rest.

The concept was proved by also adding, to the same E. coli, the gene for the protease enzyme made by HIV. This is normally toxic to any cell that makes it, but by locking it away inside the molecular cage inside the cell, the bacteria could safely make and package the substance without harm.

Even better, as the bacteria grew over a series of generations, they naturally evolved to become 10 times better at doing the task. This trick, say the researchers, is the first step towards designing bespoke molecular cages that can be used to package biosynthesised substances, including chemicals that have previously proved difficult for reasons of toxicity or stability, or are troublesome to purify.

20:01 - Planet Earth Online - Sounds under the Sea

Planet Earth Online - Sounds under the Sea

with Richard Hollingham, Steve Simpson

Sarah - Documentaries filmed underwater tend to give the impression that it's a quiet, even serene environment beneath the waves, but stick a microphone in the sea and you'd be amazed what you can hear. One man who's been doing just that is Bristol University Fish Ecologist Steve Simpson and he's found that many marine species use these underwater sounds to find their way around. Richard Hollingham went to meet him...

The sounds of a coral reef

Steve - That's a really healthy coral reef in the Philippines. It's a marine protected area, it's very well protected from fishing. First of all, there's a background crackle. It sounds almost like the sound of heavy rain on the pavement.

Richard - Or bacon in a pan or something.

Steve - Or frying bacon. That was how it's first described by mariners. That's the sound of snapping shrimp. They produce a microbubble in their claw that they fire forwards. The bubble implodes when it hits the water and that creates a very loud snap.

Richard - Now the other sound in there was almost a croaking sound.

Steve - That's right. So, there's then a whole suite of diverse sounds that fish have learned to make. So they can be croaking sounds, chirping noises that sound almost frog-like, and they do that to communicate with each other, perhaps to assess whether they're a suitable mate or for territorial behaviour.

The sounds of a coral reef

Steve - The logical next step for our work was to take our recordings of coral reefs and start to look at what information that recording contained. So the first study that we did then was to split the recording into the higher frequency noise, that is the crackling snapping shrimp, and the lower frequency noise that was the fish popping and chirping.

What we found was that larval fish were actually attracted to the higher frequency crackling noises. So we think that might be a cue that brings them into shallow water environments. When we play the sound on artificial reefs, the lower frequency noises are then used by the juvenile and adult fish that move around at night, trying to find habitat.

We've actually just got a paper out this week which shows that when you take recordings from different types of habitat, so we take recordings from an Outer Barrier, we take recordings in a lagoon, and we play that next to artificial reefs, the fish that you would find on the lagoon arrive on that artificial reef. The fish that you would find on the outer reef, you then find settling onto reefs playing those noises.

Richard - Why is this important? I mean, it's interesting, but why is it important?

Steve - The reason that I got interested in it was actually from a much more applied perspective in that I was interested in coral reef fisheries, particularly in fisheries in developing world situations where there is very limited information on what is being caught; it's a multi-species fishery, it's normally artisanal, so the fish are landed on the beach. So to try and actually model or manage populations of fish is very difficult if you've got no idea how the actual whole life cycle works.

Richard - Now your latest research comes back to those crustaceans, the snapping sound that we were hearing at the beginning.

Steve - So there are some crustaceans that do have a pelagic larval phase and then settle on to a coral reef environment. Crabs, lobsters are good examples of those, and we find that late stage larvae of crabs are attracted to coral reef noise, so that's great. But there are lots of crustaceans that live around coral reef environment in an otherwise fairly low nutrient environment; coral reefs are quite high in nutrients. So, to be able to forage on the outskirts of coral reefs, without encountering the millions of mouths that come out at night in every coral polyp, in every planktivorous fish, to find some way that you could live near to the coral reef without landing on it would be very useful.

We find that lots of groups of crustaceans that either sit in the seabed during the day time and come up into the water column at night to feed, or that are constantly in the planktonic realm, are able to detect coral reef noise, but stay away from it. So it becomes a cue that's not just used as an attractive cue but it relates to the ecology of the animals.

25:35 - Naked Engineering - Designing Computer Chips

Naked Engineering - Designing Computer Chips

with Dr Robert Mullins, University of Cambridge

Meera - This week, Dave and I have set out to investigate just how computers are engineered. We've come along to the computer laboratory at the University of Cambridge. Dave, just to start off with, what will we be looking at in terms of computers?

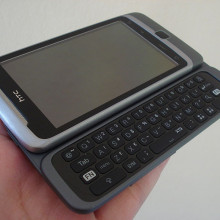

Dave - If you take apart any piece of modern equipment from a [mobile] phone to a telephone, to even a washing machine, you'll find little black rectangles called computer chips, and that's where all of the complicated controlling things are happening inside these little blocks.

Meera - Here to tell us a bit more about these computer chips is Dr. Robert Mullins, lecturer in Computer Science at the University of Cambridge...

Robert - So if we take those black pieces of plastic and we were to remove the plastic with some acid or something, we'd see, in the centre, a little piece of silicon, about 1 centimetre on the side, and on top of that would be a tiny little electronic digital circuit, and that's the computer chip. So the circuit is printed on top of the silicon chip using a process called photolithography, very similar really to a photographic process. We effectively print a layer of transistors, it's what we build our digital circuits out of, and then on top of that layer of transistors, we print layers and layers of interconnect.

I always think of a transistor as a short pipe with a tap in the middle, and the tap is what we call the gate, and either end is called the source and drain. We can turn the tap on and off by applying a voltage to the gate terminal. That changes the electrical properties in the material under the gate and then they enable the transistor to conduct or not.

Dave - A transistor is essentially an electronic switch, so you can use a small current or small voltage to turn on or off a much larger one on the other side of the transistor. How do you connect these together?

Robert - It's relatively straightforward to put the transistors down, but we then need to interconnect these huge numbers of transistors. So we need lots and lots of layers of metal to do that.

Meera - But how do all these transistors work together inside a computer, when you're giving a computer an instruction to do something?

Robert - At the lowest level, we take a few transistors, maybe 6 or 8 transistors, and put them together to build a single memory cell or a single logic gate.

Dave - These logic gates are little groups of transistors which can do a variety of little operations, so you might have a logic gate with two inputs and one output. Some of them are called 'AND' gates, so if both inputs have a positive voltage on them the output will be positive; or it might be an 'OR' gate, so if one or other of the two inputs is positive then the output will be positive. So there's a whole variety of these different logic gates.
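The two gates Dave describes can be sketched in a few lines of Python. This is only an illustration in software, with True standing in for a positive voltage and False for no voltage; the function names are ours and real gates are of course built from transistors, not code.

```python
def and_gate(a: bool, b: bool) -> bool:
    """Output is positive only if both inputs are positive."""
    return a and b


def or_gate(a: bool, b: bool) -> bool:
    """Output is positive if one or other (or both) of the inputs is positive."""
    return a or b


# Print the full truth table for both gates.
for a in (False, True):
    for b in (False, True):
        print(f"a={a!s:5} b={b!s:5}  AND={and_gate(a, b)!s:5}  OR={or_gate(a, b)}")
```

With two inputs there are only four possible combinations, so the loop above covers every case each gate can ever see.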

Meera - But how then do you step up from there to a whole computer?

Robert - So in the same way as we build gates out of transistors, we build larger circuits. For example 'adders': they're able to just take two inputs and add their numbers together. We build those out of logic gates. Once we have the more complex building blocks, so larger memories, components that are able to add numbers, then again, it's another layer of abstraction to interconnect these components to build complete processors.

Dave - So one group of people is designing transistors and another group will then put those together to form gates, and then the gates get put together to form adders, and then another group will be putting the big chunks together to form the whole chip.
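As a rough illustration of how an adder can be built out of nothing but gates, here is a Python sketch of a textbook "ripple-carry" adder. It uses a third gate type, XOR (output positive when exactly one input is positive), which isn't mentioned in the interview, and the function names and 8-bit width are our own choices for the example. It is one standard way of doing it, not necessarily how any particular chip does it.

```python
def and_gate(a: int, b: int) -> int:
    return a & b


def or_gate(a: int, b: int) -> int:
    return a | b


def xor_gate(a: int, b: int) -> int:
    return a ^ b


def full_adder(a: int, b: int, carry_in: int):
    """Add three single bits using only gates; return (sum_bit, carry_out)."""
    partial = xor_gate(a, b)
    sum_bit = xor_gate(partial, carry_in)
    carry_out = or_gate(and_gate(a, b), and_gate(partial, carry_in))
    return sum_bit, carry_out


def ripple_carry_add(x: int, y: int, width: int = 8):
    """Add two numbers bit by bit, passing the carry along like in long addition."""
    carry = 0
    result = 0
    for i in range(width):
        a = (x >> i) & 1  # bit i of x
        b = (y >> i) & 1  # bit i of y
        sum_bit, carry = full_adder(a, b, carry)
        result |= sum_bit << i
    return result, carry


print(ripple_carry_add(5, 3))
print(ripple_carry_add(200, 100))
```

The second call adds two numbers whose total doesn't fit in 8 bits, so the final carry comes out as 1, which is the hardware's way of saying the answer has overflowed.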

Robert - That's right.

Meera - Now what about the size of these chips and transistors? Things have changed over time, so the original computer chips that were designed were much bigger than the ones that we see today...

Robert - And it's one of the reasons why computers get a lot faster very quickly. Smaller transistors mean that they switch faster. It also means we can put more on a single chip. It means we can build more powerful, more complex processors. So we can illustrate this point by looking - I've got a little - this is one of the early ARM processors.

Meera - This to me looks reasonably small. It's about 7 mm by 7 mm. How many transistors are on this?

Robert - On this early processor, about 25,000.

Meera - And so, how does this compare to a chip that we would see today?

Robert - So incredibly, if you manufactured something, a simple ARM processor in the cutting edge process today, the processor would fit in a square 70 microns by 70 microns, and that's small enough to print these processors on the edge of a human hair.

Meera - So that's about 10,000 times smaller!
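The "10,000 times" figure refers to area rather than width, and the arithmetic can be checked quickly. This sketch uses only the two sizes quoted in the conversation; the variable names are ours.

```python
old_side_microns = 7 * 1000   # 7 mm expressed in microns
new_side_microns = 70         # 70 microns

# Each side is this many times shorter...
linear_shrink = old_side_microns / new_side_microns

# ...and area goes with the square of the side length.
area_shrink = linear_shrink ** 2

print(linear_shrink, area_shrink)
```

So each side is 100 times shorter, and the processor takes up 10,000 times less silicon.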

Robert - Yeah. We're really able to exploit that scaling to make processors go faster.

Meera - So a computer is made up of these very tiny chips, but there are many instructions that you give a computer, or many processes going on. Are different sets of chips, or different microprocessors, dealing with different things?

Robert - If we look at a complete computer system, there are actually many different types of chip in that device. So, some will be microprocessors like we've been talking about, others will just be dedicated to memory, to storing data or instructions. By bringing everything together on one chip, things become faster, it also becomes lower power as well.

Dave - As transistors get smaller and smaller, and smaller, eventually you're going to get the point where the transistors are going to have just 4 or 5 atoms in them.

Robert - That's right. So as we build atomic scale devices then what's called device variation really causes major problems. So two transistors manufactured next to each other on our piece of silicon can have very different characteristics. Before then, I think one of the major performance limiters will be power consumption.

Dave - This power is actually used for the physical switching of the transistors on and off?

Robert - That's right. So we dissipate power when we switch transistors on and off, and to execute each instruction, we need to switch a certain number of transistors on and off. As we build more complex processors, the number of transistors that we have to switch on and off per instruction increases and that means we have to dissipate more energy. Also, applications have changed; if you think about what people want in terms of computing devices, now they want mobile devices, they want it to run on batteries, if your processor is consuming a large amount of power, that's going to reduce your battery life. We're also building large data centres with large numbers of processors in them and I can show you the problems of trying to put a large number of processors in a single room, if I show you one of our small computer rooms...

Meera - There are about 100 computers in here and the sound of the air-conditioning alone to remove the heat from here shows just how much energy is needed!

Dave - For every kilowatt hour of energy you're using in the computers, it probably takes about another kilowatt hour to get it out through the air conditioners, making the problem twice as bad...

Robert - And this is a fraction of the size of a modern data centre, and of course the running cost of the data centre, a big part of it is the total electricity bill which comes from the power dissipated in the processors.

Meera - Right. Now that I can hear you again Robert, tell me a bit about your research - you're also trying to make chips that will need less power?

Robert - Yes. The approach we're taking is to put hundreds or even thousands of very, very simple processors on a chip and then design this in a way that they can be specialised for a particular application. It's this specialisation that enables us to reduce power.

Dave - This is because the general purpose processor has got to be able to do everything, so if you're doing any one thing, lots of it is essentially being wasted all the time.

Robert - That's exactly right.

Meera - So you're really having to redesign and really think about how a computer is actually made, and create different or new types of chips?

Robert - Yeah and I think the combination of the new applications that we're seeing for computers at the moment make this one of the most exciting times to be learning about computer science, or trying to solve these problems.

33:50 - Energy Efficient Microchips

Energy Efficient Microchips

with Mike Muller, ARM Chief Technology Officer

Chris - If you own a mobile device, whether that's a phone or a camera, or a portable music player, then there's probably a 99.9% chance that at least some of the computer chips running inside that device are designed by a company based here in Cambridge called ARM. And if you owned a BBC microcomputer in the 1980s, then you've also come into contact with one of their other products.

They're actually a world leader in developing digital solutions and two of the projects they're working on at the moment are ways to make computer chips smaller and much more energy efficient. Mike Muller is ARM's Chief Technology Officer. He's here with us today. Hello, Mike.

Mike - Hiya.

Chris - Thank you for coming in and joining us on the Naked Scientists. First of all, what actually determines how energy hungry a chip is?

Mike - Well I guess there are three main things. The first is, how big is it? The bigger it is, the more transistors it's got, the more power it takes. So a lot of what we do is how you get those compromises and design something that's big enough for the task in hand, but doesn't overengineer things because that takes excess power.

Chris - Why does ARM account for 99.9% of the marketplace? Why have you got that huge dominance? What is it about your technology that makes it so attractive to all those different industries?

Mike - Well it's two things. The technology is part of it, but possibly more important is the way we went about our business, which is actually not to manufacture anything and just to license our intellectual property to people who do, letting them specialise in what makes them good, and lets us focus on what we do, which is design low-power microprocessors.

Chris - But at the same time, the design must have something going for it, or all those licensees wouldn't use the technology, so what is it that they're going for?

Mike - We've put in a lot of work for how you come up with new techniques to save power. So, a lot of people in the past have focused on how do you make a chip go as quickly as possible. If you try and make it go as fast as it can, you end up actually burning a lot of excess power. If you back off just a little bit and make slightly different compromises, you can come up with solutions that take a lot less power.

Chris - And of course, when we're talking about things people want to carry around with them, the batteries are the vast bulk of the weight and were the thing holding back the technology in the early days because the more powerful you make them, the more energy they're going to get through; so they're going to burn off batteries more quickly. So, if you've got more efficient chip designs, that's got to be a good thing.

Mike - Absolutely. I think, recently, there's been a change from people designing for what's the absolute power you can have, to [designing for] low power devices, for batteries. And in the future, it's actually not the battery that's the issue, it's the available energy. When you start to have really tiny devices embedded into things all around you, you're scavenging energy from the environment, you actually need to worry about what's the available power, and that could be very low indeed.

Chris - So how can we get the energy requirements of the chips of tomorrow down? What sorts of technologies are you guys working on, in order to make that a reality?

Mike - One of the techniques we're working on involves lowering the voltage that a chip runs at, because power is actually proportional to voltage squared; so it's one of the most important things you can do to lower power. If you took the article before, when somebody designs a process, they work out how it works then put a little margin of safety. Then you heard about how you take the transistors and you build a few gates, and the people that design that put in a little more margin of safety. Then we come along and design our processors and put in a little more margin of safety, and then somebody puts it all together in the chip. What you end up with is a safe chip that you know works all the time and it possibly is then specified to run at 3 volts. In reality, it might run at 2 ½ volts, and the difference between 2 ½ volts and 3 volts is 50% in power.

Chris - Because it's squared.

Mike - Because it's V squared. So, what you really want to do is run that chip at 2 ½ volts. People who over-clock their PC sometimes say, "I actually know that this chip can go faster than it does. I'll turn it up and run it faster." And the problem they have is, on a hot day, it might get too hot and then it stops working. So the idea is something we borrowed from the mobile phone industry where they said, "radio signals are really noisy. We have to have error recovery because you get glitches and noise." And so when you're with a mobile phone, transmitting to a base station, you actually turn down the power of the transmitter, until it starts making mistakes. The error recovery cuts in, and you can then recover that and you don't notice, and then it turns the power up as you move further away.
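As a rough check on the voltage figures quoted above, here is a minimal sketch. It assumes power scales purely with the square of the supply voltage, with everything else (capacitance, clock frequency) held fixed; `relative_power` is an illustrative helper, not anything from ARM.

```python
def relative_power(voltage, reference_voltage):
    """Power at `voltage` relative to `reference_voltage`, assuming P is proportional to V^2."""
    return (voltage / reference_voltage) ** 2

spec_v = 3.0    # specified voltage mentioned in the interview
actual_v = 2.5  # voltage the chip might really need

saving = 1 - relative_power(actual_v, spec_v)  # fraction saved by dropping to 2.5 V
extra = relative_power(spec_v, actual_v) - 1   # extra power paid for staying at 3 V

print(f"Saving at {actual_v} V: {saving:.0%}")
print(f"Extra power at {spec_v} V: {extra:.0%}")
```

Under this simple model, dropping from 3 volts to 2 ½ volts saves roughly 31% of the power, or, put the other way round, staying at 3 volts costs about 44% more. That is in the region of the round "50%" figure given in conversation.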

Chris - So it's dynamic error recovery, isn't it - your chips will run at a threshold where they're just about not making any mistakes, and you're engineering that into the chip, rather than into the software that's running on the chip.

Mike - That's absolutely right and most digital designers are really uncomfortable with the idea that their chip will make mistakes. So, we've designed a processor which will correct its errors dynamically which allows you to turn it down until you find that point where it just starts making mistakes. As long as the power to recover from those errors is better than the power you've wasted, you're ahead of the game.
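The break-even idea described here can be sketched with a toy model. Everything in it is an assumption made for illustration: switching power is taken as V squared, errors appear only below a hypothetical `critical_v`, and the cost of recovering from them grows with the square of the shortfall. None of the numbers or function names come from ARM's design.

```python
def total_power(voltage, critical_v=2.5, recovery_weight=20.0):
    """Toy model: switching power (V^2) plus the power spent recovering from errors.

    The parameters are made-up illustrative values, not measurements.
    """
    switching = voltage ** 2
    # No errors at or above the critical voltage; below it, recovery cost rises steeply.
    shortfall = max(0.0, critical_v - voltage)
    recovery = recovery_weight * shortfall ** 2
    return switching + recovery

def tune_voltage(start_v=3.0, step=0.05, min_v=1.0):
    """Keep lowering the voltage while each step still reduces total power."""
    v = start_v
    while v - step >= min_v and total_power(v - step) < total_power(v):
        v = round(v - step, 2)
    return v

best_v = tune_voltage()
print(f"Settled at {best_v} V, total power {total_power(best_v):.2f} "
      f"versus {total_power(3.0):.2f} at 3.0 V")
```

In this sketch the tuner settles slightly below the point where errors first appear, because for the first few steps the power saved by the lower voltage still outweighs the power spent on recovery.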

Chris - The benefit of doing this kind of thing would be that not only are you using less energy because the voltage is down, but also, that means you can actually make the chip bigger and do more, so actually, the device it's in, can become more powerful without having to burn off more energy.

Mike - Well, we're always in a race; the software guys want to write more complicated software and therefore they want more power, while we'd like to have a static world where you could make things simpler and smaller. So there's always a balance between actually designing a lower power device and then finding somebody that's just made it all run faster and used that all up again.

Chris - So when a company like ARM says, "Right. We're going to come up with this sort of design." How long from the concept to it appearing in a phone like mine, sitting here on the desk?

Mike - Well we started work on this with the university... about 7 years ago... was when you could point to the first germ of an idea, and I reckon it's going to be another 3 or 4 years until you see those kinds of things in real products. So it's a long time from good idea through to actual product in the market.

Chris - When we do see it in the market, what sort of difference will it make? What will you be able to say to designers of equipment that means that they will say, "Well yes, okay. We'll definitely invest in this and this is the benchmark in the future?"

Mike - You'll probably, as a user, never know. It'll be just one of these techniques that means the next phone you buy has slightly longer battery life and does even more things than it did before, and you'll just take it for granted that that's technology marching on its forward progress...

40:22 - Computing With Waste Energy

Computing With Waste Energy

with Professor Andy Hopper, Cambridge University

Sarah - As the internet and our reliance on computers grows, so does the amount of energy that this industry consumes, which means significant CO2 emissions. But is there a way to make the process more environmentally friendly? Computer Scientist Professor Andy Hopper from Cambridge University has been working on a way to turn waste energy into useful computer processing around the planet. Hello, Andy...

Andy - Hello there.

Sarah - To put some numbers on the problem first, how much energy are we looking at and how much CO2 does running the internet consume?

Andy - Well, about 3, 4, 5, or 6% of global energy use goes into computing one way or another, so it's not a huge number at the moment, but it's going up fairly quickly.

Sarah - And what sort of volume of CO2 is that? I mean, compared to other industries, how does that then compare?

Andy - Well, the aviation industry would be probably two or three times that, so we're catching up on it. Maybe in due course, it'll be comparable.

Sarah - What's the solution that you've been working on to help counteract this?

Andy - Well, what we're trying to understand is the notion of taking computing to energy sources and using energy which would otherwise be lost because it cannot be transported to the place where it can be used. So the notion is to transmit bits around the planet rather than energy around the planet, and see if you can be a kind of a sponge for either surplus energy or, perhaps more importantly, energy that cannot get to houses or to trains or to situations where it can be used in a more obvious fashion.

Sarah - So this means running processes in an area of the world where it's not being used locally, so it's night-time and there's not a whole lot going on, and you can then use that downtime for an area where it's during the day, and you've got a lot of traffic going on.

Andy - That's right. So it's either the windmill has speeded up somewhere or it's night-time and there isn't much local use for whatever reason. What we're trying to find out is to what extent is this possible, what actually is the energy cost of shipping the data and the problems to these potentially remote places because it must be a win rather than lose. But fibre optic communication is a marvellous technology because essentially the further you go, the more energy you don't really use. So you can go much, much further without paying an energy price. It's almost like magic. And then seeing at what level of granularity, what sort of problems, what sort of tasks can be done in this way, and soak up this surplus energy, so that in due course, when 10 or 15 or 20% of the world's energy is going on computing, we can say, "That may be 3 or 4% of energy that would otherwise be lost."

Sarah - What sort of tasks are you looking at that will be suitable? I'm guessing ones where you want an instantaneous reaction, so e-commerce and things like that, they aren't necessarily so suitable as things where you don't necessarily need that instant feedback?

Andy - That's right. So anything that requires an instant response, that is interactive, is less suitable to this sort of approach. But nevertheless, you can go a reasonable way, it's constrained by the speed of light, so it's not that it has to be just around the corner. It might be at the other end of your country, up in Shetland or wherever. But nevertheless, we're trying to divide up the tasks into those that are interactive and require an immediate solution, and perhaps others which are cataloguing tasks, recognition tasks which can be done more slowly, and therefore, amenable to this sort of treatment.
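To give a feel for the speed-of-light constraint mentioned here, the following is a minimal sketch. It assumes light travels through optical fibre at roughly 200,000 km per second (about two-thirds of its speed in a vacuum) and ignores routing and processing delays; the distances are hypothetical examples, not figures from the interview.

```python
SPEED_IN_FIBRE_KM_S = 200_000  # assumed: roughly two-thirds of the speed of light in a vacuum

def round_trip_ms(distance_km):
    """Minimum there-and-back delay over fibre, in milliseconds, ignoring equipment delays."""
    return 2 * distance_km / SPEED_IN_FIBRE_KM_S * 1000

# Hypothetical distances chosen purely for illustration.
for label, km in [("other end of the country", 1_000), ("another continent", 10_000)]:
    print(f"{label} ({km} km): at least {round_trip_ms(km):.0f} ms round trip")
```

On these assumptions a server 1,000 km away adds at least 10 milliseconds per round trip, which is why tasks that do not need an instant response are the natural candidates for being moved to distant energy sources.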

Sarah - How realistic is this? You mentioned the advent of fibre optics. Are our current networks able to deal with this? Are they fast enough at the moment?

Andy - Well, to some extent, it is already done, in that you place server farms close to cheap energy sources and ship data there and get the answers back. But you know, it is appropriate to be slightly fanciful, not completely unrealistic, but slightly fanciful in one scenario, and looking maybe 10 or 15 years out where the networking around the world is perhaps somewhat different. There is much more fibre communications. We have server farms in perhaps what today are unusual places, in the middle of the oceans where it's very windy, from which you most definitely couldn't get the energy back to heat a house, but you might be able to do the computing jobs.

Now you have to be very careful about this. You mustn't cheat, so you have to take into account the energy cost of getting the computing there, so to speak, building that plant, including the embedded energy cost seen in the manufacturing of it, maintaining it, and then recycling it or shutting it down in an appropriate way at the end of its useful life. So you have to take the whole picture, but nevertheless, with that in mind, and the notion that distance doesn't matter so much with fibre optics, I think the world may be a different place. One other comment that follows on from Mike's contribution earlier, I think it's energy proportional computing and communications that is very, very important going forward. That, rather than over provisioning, over engineering, over dimensioning, which has traditionally been the case and is now becoming less important in mobile communications, spreads to everything. Every computer on the planet [should be] either doing useful work or shut down, or at least in a very deep sleep mode. Now, one way of helping to understand this is looking at this and chasing the energy, because if you move a job somewhere else then the one that was originally destined for that job can be switched off.

Sarah - What is the scale of the saving in energy that we're looking at?

Andy - Well to be quite honest, I don't know! That's the challenge we have because to be realistic about this, we have to postulate some kind of model of how the networks will work, take current jobs, divide them up, and so on. But initial results suggest that it's not going to be just 1 or 2%. There is a reasonably good opportunity here for improving the way computing works and so, ask me back in about 5 years' time and I might not hesitate to give you an answer.

Sarah - I suppose, even though it may be a certain amount of percentage now, with time moving on, it'll become more important as we become more reliant on the internet, but will we expect to see this sort of thing being implemented any time soon?

Andy - Well, the beginnings of it are happening now, but let me just point out another kind of win-win. Not only using energy that would otherwise be lost, but also in many cases, using that energy to compute things about the real world which save even more energy, optimising the physical world by using energy in the digital world and that energy itself, being so to speak 'free lunch' energy, now that would be a wonderful scenario. But as I say, I am paid to be slightly fanciful, and there it is!

Do we really need faster computing power?

We put this question to Professor Andy Hopper and Mike Muller...

Andy - There is a tension on the one hand by having more sophisticated programming languages and therefore programs using them. It is easier to write some kinds of software. It is possibly easier to address large problems. But on the other hand, that introduces inefficiency, sometimes very substantial inefficiency. So, the challenge is to provide programming environments and programming languages which, on the one hand, provide the high level abstractions that make it easier to program and not make huge mistakes, so to speak, but, on the other hand, have a more direct connection to the processor itself and are more efficient in the use of resources, and in particular have energy proportional computing as their underpinning requirement.

Chris - Mike, did you want to point out anything about the processor manufacturer's perspective on this in terms of how you design your chips around making it easier for people to write software that caters to that sort of thing?

Mike - Well we certainly try to address the new languages that are coming that make it more efficient. I think, to follow on, the example in servers is that the best way to save power in servers is perhaps to do half as much work, and that's a programming challenge where you could find those kinds of savings. If you type something like "Naked Scientists" into your favourite search engine...

Chris - Better still, Mike, you can now type "Mike Muller Naked" into your favourite search engine and...

Mike - Yeah, thank you for that. It'll come back and say "so many results found in so many seconds." What they're starting to do is, with popular searches, to look at the question: What happens if I just search half as hard? Did it actually change how many results people clicked on? So, you actually dynamically change how much searching you're doing because, again, you may be over-searching and providing results that are better than you need.

Are newer computers more efficient than older ones?

We put this question to Mike Muller of ARM...

Mike - There's no doubt, newer computers are more efficient than older ones and they get better every year. It's the perennial technology question of, "If you wait just a bit longer, you can get one that goes faster, takes less power, and will be a bit cheaper." But you can always wait forever...

Is there a practical limit to how fast computing can become?

We put this question to Mike Muller and Professor Andy Hopper...

Mike - I think computers are slowing down, they're not getting faster and faster. The real challenge is how you make things go in parallel. How do you divide things up, and actually have multiple computers working on the same problem at once? That's probably the way you actually push performance in the long run.

Andy - We are approaching what people have described as the silicon endpoint. Mind you, the silicon endpoint seems to have a half-life of about 5 years! That is the point at which we really don't make any more substantive progress. And so, the speed of individual chips will asymptote and will be limited. Now the parallel point is important, but that's an old cherry, and how to make things [process] in parallel is very difficult...

51:13 - Is sending an e-card environmentally friendly?

Is sending an e-card environmentally friendly?

We put this to Dr Andy Rice, a Computer Scientist at the University of Cambridge, and Professor David MacKay, Chief Scientific Adviser to the Department for Energy and Climate Change...

Andy Rice - I think this is quite a good question and what you need to think about is something called "lifecycle assessment". This is basically an idea of trying to think about all the different stages in the production of different goods and services, and what they actually cost the environment. I had a look around; there aren't many studies on this for greeting cards, but I did find some work which considers the impacts of newspapers. The authors of this paper - it was a Swiss study - found that if you have a weekday newspaper, it costs about the same as reading an online one, but with huge amounts of uncertainty and lots of assumptions.

Say, for example, you change the electricity mix - whether you have a lot of fossil fuels in it or not: the online one moves a huge amount. But if you consider food, for example, the difference in footprint between a steak and a risotto is about 200 times the impact of what these authors found the newspaper was.

So, send your Valentine's card, eCard, physical, and then worry about instead what you choose from the menu on your first date.

Diana - There are a huge number of variables to consider, but here at the Naked Scientists, we like to go a bit deeper. In fact, we got the author of "Without Hot Air" and Chief Scientific Adviser to the Department for Energy and Climate Change - that's Cambridge University's Professor David MacKay - to work it out for us. Sadly, he was too busy with his myriad responsibilities to record it, so his answer is voiced by our very own Ben Valsler...

Ben - To calculate the energy cost of the eCard, we can add 1 minute of the sender's computer time, that's 2 x 100 watts x 60 seconds and that gives 12,000 joules. To be on the safe side, we add another 50% to allow for the internet energy cost, and that gives us a total cost of 18 kilojoules.

Moving on to the paper card, the card itself plus the envelope have a chemical energy of around 0.12 kilowatt hours. An educated guess says that the energy expended at the paper mill is likely to be similar, so that gives us 0.24 kilowatt hours. But then we can expect a fraction to be reclaimed if the card is recycled later. So let's round down and say the card costs about 0.2 kilowatt hours.

But there's still the cost of transport to the shop and through the postal system. What is the energy cost of picking up the card from the post box and sending it out across the country? Sharing equally between the postal items, the collection part of the journey costs 0.01 kilowatt hours per item. Then, the card has to travel a bit further. Let's say from Lancaster to Skegness via several depots, the answer is 0.009 kilowatt hours. Adding this figure to the collection cost gives roughly 0.02 kilowatt hours, that gives us a total value for the paper card of 0.22 kilowatt hours. However, the eCard only costs 18 kilojoules, which converts to 0.005 kilowatt hours. That's on the order of 40 times less.
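The arithmetic in this answer can be retraced in a few lines. This is only a sketch using the figures quoted above; the factor of 2 in the eCard sum is assumed here to cover two computers (presumably the sender's and the recipient's), which the answer does not spell out.

```python
JOULES_PER_KWH = 3.6e6  # 1 kilowatt hour = 3.6 million joules

# eCard: 2 x 100 watts x 60 seconds, plus 50% for the internet energy cost.
# Assumption: the factor of 2 stands for two computers.
ecard_joules = 2 * 100 * 60 * 1.5
ecard_kwh = ecard_joules / JOULES_PER_KWH

# Paper card: card, envelope and paper mill (rounded down for recycling), plus postage.
card_kwh = 0.2
collection_kwh = 0.01
transport_kwh = 0.009
paper_kwh = card_kwh + collection_kwh + transport_kwh

print(f"eCard: {ecard_joules / 1000:.0f} kJ = {ecard_kwh:.3f} kWh")
print(f"Paper card: {paper_kwh:.2f} kWh")
print(f"Paper card costs about {paper_kwh / ecard_kwh:.0f} times as much energy")
```

Without rounding the postage figure up to 0.02 kilowatt hours, the ratio comes out at roughly 44 to 1, consistent with the "order of 40 times" quoted.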
